Executive summary

Agent Skills are an emerging concept introduced by Anthropic: reusable playbooks, packaged for an agent, that guide how it executes tasks.

Skills use progressive disclosure to relieve the context window congestion caused by preloading tool definitions: only each Skill’s description is preloaded, and the Skill itself is loaded dynamically when it is needed.

Although AI agents gain new abilities with Skills, the Skills layer also exposes a new class of attack surface.

This blog post categorizes the top 10 critical threats related to the Skills layer that security practitioners should consider while building and evaluating agents with Skills.

The Skills ecosystem is still evolving and requires proactive security design, clear trust boundaries, scoped permissions, and continuous validation to prevent it from becoming the next systemic weak point in agentic architectures.

Recently, Anthropic introduced Agent Skills into the agentic world and reshaped how we think about building AI agents.

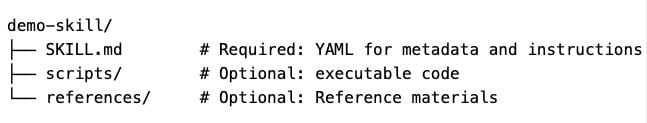

In simple terms, a Skill is a reusable package of specialized knowledge and procedures that an agent can invoke on demand. Skills follow a standard format in which a directory contains a SKILL.md file with metadata and detailed instructions, and may also bundle scripts, templates, and other assets that support the capability.

You can think of a Skill as a comprehensive onboarding guide written by an expert for a new employee. It captures best practices, structured knowledge, and operational instructions all in one place. But unlike a static file, a Skill is designed to be evaluated and used by the agent at runtime.

The just-in-time context architecture

The key innovation is in how context is managed and when content is provided. Skills use a design pattern called progressive disclosure to manage context and token use efficiently.

Instead of loading all instructions and resources for every Skill up front, which would consume tokens and reduce quality, Skills are structured to have three layers.

Discovery. At start-up, only the metadata (name and description) is preloaded into the agent’s context. This gives the agent a minimal index of capabilities without flooding the context window.

Activation. When the agent detects that a user task matches a Skill’s description or context, it then loads the full SKILL.md instructions.

Execution. Additional capabilities, including scripts, templates, and other resources, are accessed only when needed. This allows detailed content to be used without permanently occupying valuable context tokens.

This layered approach keeps agents responsive, allows many Skills to be available without token bloat, and lets the agent load context just in time for the task at hand.
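The three layers can be sketched as a small registry. This is a minimal illustration, not Anthropic's implementation: `Skill`, `SkillRegistry`, and the `context` list are hypothetical names, and the Skill directory is modeled as an in-memory object rather than files on disk.

```python
from dataclasses import dataclass, field


@dataclass
class Skill:
    """Hypothetical in-memory stand-in for a Skill directory."""
    name: str
    description: str
    instructions: str                          # body of SKILL.md
    resources: dict[str, str] = field(default_factory=dict)


class SkillRegistry:
    def __init__(self, skills: list[Skill]) -> None:
        self._skills = {s.name: s for s in skills}
        self.context: list[str] = []           # what the model would see

    def discover(self) -> None:
        # Discovery: only name and description enter the context.
        for s in self._skills.values():
            self.context.append(f"[skill] {s.name}: {s.description}")

    def activate(self, name: str) -> None:
        # Activation: full instructions load once a task matches.
        self.context.append(self._skills[name].instructions)

    def read_resource(self, name: str, path: str) -> str:
        # Execution: resources are fetched on demand, never preloaded.
        return self._skills[name].resources[path]
```

In this sketch, the context grows by one short line per Skill at start-up and by one SKILL.md body per activated Skill, while resources never enter the context unless the caller chooses to add them.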

Skills vs MCP and subagents

Tools provide new capabilities, while Skills provide structured expertise; that is, a tool enables actions that an agent cannot perform at all, whereas a Skill improves how well it performs tasks it could attempt on its own.

Unlike many Model Context Protocol (MCP) setups that preload every tool schema into the context window, consuming tens of thousands of tokens upfront and increasing the risk of tool conflicts, a Skill loads lightweight metadata first and adds detailed instructions only when needed, preserving context for the active task.

Compared with subagents, which encapsulate their own prompts, tools, and state for autonomous or parallel workflows, Skills are lighter and more composable, allowing multiple agents to share procedural knowledge without maintaining separate agent instances. In practice, Skills suit small, focused, reusable functions, while subagents are better for broader expertise, independent state, or parallel execution.

The top 10 threats related to Agent Skills

As with other emerging agentic capabilities, Skills dramatically expand the attack surface. The recent OpenClaw incident made this tangible: The most downloaded Skill in the marketplace was in fact malware, designed to exfiltrate SSH keys, crypto wallets, and browser cookies, and then establish a reverse shell to an attacker-controlled server.

The security risks introduced by the rapid spread of Skills fall into 10 key areas. By categorizing these 10 threats, our goal is to provide security teams with a practical foundation for threat modeling Skill-enabled agents. Each category can be translated into concrete review questions, architectural controls, and runtime safeguards.

SKILL01: Indirect prompt injection via Skill

SKILL02: Skill selection manipulation

SKILL03: Metadata and instructions mismatch

SKILL04: Excessive Skill authority and permission scope

SKILL05: Skill supply chain manipulation

SKILL06: Unexpected code execution via helper scripts

SKILL07: Skill-to-Skill data flow abuse

SKILL08: Memory abuse through Skills

SKILL09: Skill injection via dynamic discovery

SKILL10: Skill-induced resource and financial exhaustion

SKILL01: Indirect prompt injection via Skill

Natural language in SKILL.md is injected directly into the model’s context and treated as authoritative input. Hidden instructions embedded in Skills, adversarial phrasing framed as best practice, and conditional instructions that activate on certain inputs can directly shape the model’s reasoning and decisions.
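As a rough illustration of a pre-load review step, the sketch below flags a few patterns that commonly hide instructions in markdown. The pattern set and the `scan_skill_text` name are assumptions made for this example; heuristics like these catch only crude cases and would not stop adversarial phrasing framed as best practice.

```python
import re

# Illustrative heuristics only; a real scanner would need a far broader rule set.
SUSPICIOUS_PATTERNS = {
    "html_comment": re.compile(r"<!--.*?-->", re.DOTALL),
    "zero_width_char": re.compile("[\u200b\u200c\u200d\u2060\ufeff]"),
    "override_phrase": re.compile(
        r"ignore (all |any )?(previous|prior) instructions", re.IGNORECASE
    ),
}


def scan_skill_text(text: str) -> list[str]:
    """Return the names of the heuristics that matched the SKILL.md text."""
    return [
        name for name, pattern in SUSPICIOUS_PATTERNS.items() if pattern.search(text)
    ]
```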

SKILL02: Skill selection manipulation

Agents choose Skills based on their description. Attackers can create Skills with misleading names, descriptions crafted to match common intents, or overbroad capability descriptions to increase the chance that they are selected in inappropriate use cases.

SKILL03: Metadata and instructions mismatch

A mismatch occurs when there is a gap between what the Skill description declares and the runtime behavior that its instructions actually define. Because agents use progressive disclosure, only the Skill metadata is preloaded, and the agent decides at runtime whether to call the Skill based on that metadata alone. The Skill’s actual execution logic remains opaque to the agent until the Skill is invoked.
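One way to surface such a gap before deployment is to compare what a Skill declares with what its instructions appear to do. The sketch below is a crude keyword-based approximation: the capability labels, the regular expressions, and the `undeclared_capabilities` function are all hypothetical, and the idea that a Skill declares capabilities as a set of labels is an assumption of this example.

```python
import re

# Hypothetical capability labels and the instruction patterns that imply them.
CAPABILITY_HINTS = {
    "network": re.compile(r"https?://|\bcurl\b|\bwget\b", re.IGNORECASE),
    "shell": re.compile(r"\bbash\b|\brun the script\b|\bsubprocess\b", re.IGNORECASE),
    "secrets": re.compile(r"\.env\b|\.ssh\b|api[_ ]key", re.IGNORECASE),
}


def undeclared_capabilities(declared: set[str], instructions: str) -> set[str]:
    """Capabilities the instructions appear to use but the metadata never declared."""
    observed = {
        cap for cap, pattern in CAPABILITY_HINTS.items() if pattern.search(instructions)
    }
    return observed - declared
```

A non-empty result does not prove malice, but it is a reasonable trigger for manual review.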

SKILL04: Excessive Skill authority and permission scope

Skills often leverage capabilities through broad APIs or shared credentials, which expand the agent’s authority surface. Skills that request generic permissions, reuse shared credentials, or inherit agent-wide authority increase the likelihood of privilege boundary bypass. When permissions are not tightly scoped for each Skill, each integrated Skill becomes another path for unintended access or abuse.
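A deny-by-default check per Skill is one way to keep authority scoped. In this minimal sketch, `SkillGrant`, `ScopeError`, `authorize`, and the scope strings are hypothetical names chosen for illustration, not part of any Skills specification.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SkillGrant:
    """Hypothetical per-Skill permission grant."""
    skill: str
    scopes: frozenset[str]


class ScopeError(PermissionError):
    """Raised when a Skill requests a scope it was never granted."""


def authorize(grant: SkillGrant, requested_scope: str) -> None:
    # Deny by default: a Skill may use only the scopes granted to it by name,
    # never the agent's full set of credentials.
    if requested_scope not in grant.scopes:
        raise ScopeError(f"{grant.skill} is not granted {requested_scope!r}")
```

With a grant such as `SkillGrant("invoice-summarizer", frozenset({"files:read"}))`, a call to `authorize(grant, "email:send")` raises `ScopeError` instead of silently inheriting the agent's wider authority.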

SKILL05: Skill supply chain manipulation

Skill packages are sourced from external registries or repositories, so the trust boundary extends beyond the agent runtime and into the developer ecosystem. Attackers can exploit this by using typosquatting to mimic legitimate Skill names. They can also introduce malicious dependency chains through external URLs in the Skill instructions, which can fundamentally change the Skill’s behavior at runtime.
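Two inexpensive install-time checks are name similarity against an allowlist and hash pinning of reviewed packages. The sketch below assumes a hypothetical `ALLOWLIST` that maps Skill names to the SHA-256 of a reviewed archive; the 0.8 similarity cutoff is an arbitrary choice for illustration.

```python
import difflib
import hashlib

# Hypothetical allowlist: Skill name -> SHA-256 of the reviewed package archive.
ALLOWLIST = {
    "pdf-forms": hashlib.sha256(b"reviewed pdf-forms archive").hexdigest(),
}


def check_skill(name: str, archive: bytes) -> str:
    """Return 'accept' or a rejection reason for a Skill about to be installed."""
    if name not in ALLOWLIST:
        # A near-miss on an allowlisted name is treated as likely typosquatting.
        near = difflib.get_close_matches(name, list(ALLOWLIST), n=1, cutoff=0.8)
        if near:
            return f"reject: '{name}' looks like a typosquat of '{near[0]}'"
        return f"reject: '{name}' is not allowlisted"
    if hashlib.sha256(archive).hexdigest() != ALLOWLIST[name]:
        return f"reject: '{name}' does not match the pinned hash"
    return "accept"
```

Hash pinning does not cover instructions that fetch external URLs at runtime, so those references still need separate review.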

SKILL06: Unexpected code execution via helper scripts

A Skill is a packaged folder of instructions and resources, which may include supplementary Python, JavaScript, or shell scripts. Although scripts typically run in a sandboxed environment, under certain circumstances they can access sensitive information (such as .env files or .ssh folders), exfiltrate limited amounts of data, or perform actions on behalf of the agent account.
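For Python helper scripts, a static pass over the imports gives a quick first signal before anything runs. The sketch below uses the standard `ast` module; the `RISKY_MODULES` set and the `risky_imports` name are illustrative assumptions. Static checks like this are easy to evade (for example with dynamic imports), so they complement sandboxing rather than replace it.

```python
import ast

# Modules that let a helper script reach the network or spawn processes.
# The list is illustrative, not exhaustive.
RISKY_MODULES = {"socket", "subprocess", "urllib", "http", "ftplib"}


def risky_imports(source: str) -> set[str]:
    """Return the risky top-level modules imported by a Python helper script."""
    found: set[str] = set()
    for node in ast.walk(ast.parse(source)):
        if isinstance(node, ast.Import):
            names = [alias.name for alias in node.names]
        elif isinstance(node, ast.ImportFrom):
            names = [node.module or ""]
        else:
            continue
        # Reduce dotted names such as "urllib.request" to their top-level module.
        found.update(name.split(".")[0] for name in names)
    return found & RISKY_MODULES
```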

SKILL07: Skill-to-Skill data flow abuse

Many agents automatically pass the output of one Skill directly into another Skill, forming implicit trust chains between capabilities. This expands the attack surface because the boundary is no longer just between the agent and external input. When the output of one Skill is consumed by another without strict validation, policy enforcement, or context isolation, structured or adversarial content can propagate across the execution graph.
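Strict validation at the handoff can be as simple as forwarding only expected, typed, bounded fields. The following sketch assumes that Skill output arrives as a dictionary; `validate_handoff`, the schema format, and the 200-character limit are hypothetical choices for illustration.

```python
def validate_handoff(output: dict, schema: dict[str, type], max_len: int = 200) -> dict:
    """Pass only expected, correctly typed, bounded fields to the next Skill."""
    clean = {}
    for key, expected_type in schema.items():
        value = output.get(key)
        if not isinstance(value, expected_type):
            raise ValueError(f"field {key!r} is missing or has the wrong type")
        if isinstance(value, str) and len(value) > max_len:
            raise ValueError(f"field {key!r} exceeds {max_len} characters")
        clean[key] = value
    # Keys outside the schema are dropped rather than forwarded.
    return clean
```

Dropping unexpected keys means that a free-text field smuggled into one Skill's output never reaches the next Skill's context.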

SKILL08: Memory abuse through Skills

Skills with write access to long-term memory stores become an indirect mechanism for persistent manipulation. Instead of a one-time attack, a malicious actor can use these Skills to rewrite the agent's long-term goals, corrupt stored task context, or leave hidden instructions for future tasks. Injected content framed as factual context, user preferences, or task history may turn the agent's own memory into a tool for the attacker, making the threat last much longer than a single session.
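One mitigation is to confine each Skill to its own memory namespace and to block writes to keys that steer the agent. The sketch below is a toy in-memory store: `ScopedMemory`, its method names, and the `PROTECTED_KEYS` set are assumptions made for this example.

```python
from typing import Optional


class ScopedMemory:
    """Hypothetical memory store that confines each Skill to its own namespace."""

    # Keys that shape the agent's behavior and that no Skill may overwrite.
    PROTECTED_KEYS = {"goals", "system_instructions"}

    def __init__(self) -> None:
        self._entries: dict[tuple[str, str], str] = {}

    def write(self, skill: str, key: str, value: str) -> None:
        if key in self.PROTECTED_KEYS:
            raise PermissionError(f"{skill} may not write protected key {key!r}")
        # The Skill name is part of the storage key, so writes stay isolated.
        self._entries[(skill, key)] = value

    def read(self, skill: str, key: str) -> Optional[str]:
        # A Skill can read back only what it wrote itself.
        return self._entries.get((skill, key))
```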

SKILL09: Skill injection via dynamic discovery

Although dynamic Skill discovery is still experimental, it allows agents to identify missing capabilities and install new Skills at runtime. A Skill introduced this way arrives after any load-time review has already run, which pushes this threat beyond what can be detected at load time.

SKILL10: Skill-induced resource and financial exhaustion

Attackers can trigger Skills that perform computationally expensive tasks, high-latency API calls, or premium-tier model queries to cause denial of service (DoS) or "wallet exhaustion." This is particularly effective when Skills have built-in retry logic or loops that can be exploited to keep the agent busy and unresponsive to legitimate users.
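A per-task budget that counts retries as calls is a simple guard against this pattern. In the sketch below, `SkillBudget`, `BudgetExceeded`, and the specific limits are hypothetical; a real deployment would take cost figures from its own metering.

```python
class BudgetExceeded(RuntimeError):
    """Raised when a Skill would exceed its call or spend limit."""


class SkillBudget:
    """Hypothetical per-task budget covering both call count and spend."""

    def __init__(self, max_calls: int, max_cost_usd: float) -> None:
        self.max_calls = max_calls
        self.max_cost_usd = max_cost_usd
        self.calls = 0
        self.cost_usd = 0.0

    def charge(self, cost_usd: float) -> None:
        # Retries are charged like any other call, so a retry loop is bounded.
        over_calls = self.calls + 1 > self.max_calls
        over_cost = self.cost_usd + cost_usd > self.max_cost_usd
        if over_calls or over_cost:
            raise BudgetExceeded("Skill budget exhausted for this task")
        self.calls += 1
        self.cost_usd += cost_usd
```

Calling `charge` before every Skill invocation, including retries, turns an unbounded loop into a bounded failure that can be surfaced to the user.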

Conclusion

The Skills ecosystem is still evolving, yet it addresses context window congestion and introduces a new design pattern in which agents gain structured expertise, not just tool access.

While the OWASP Agentic Top 10 covers broad agent risks, this blog post focused on the distinct security implications of the Skills layer as an extension of the base agentic framework.

These implications create elevated risk for early adopters: security best practices are still maturing, practitioners are only beginning to understand emerging threat patterns, and defensive solutions are still evolving.

Practitioners should treat Skills as a distinct trust boundary, apply least-privilege access to each Skill, scan Skills before use, validate each Skill’s description against its runtime behavior, restrict Skills to allowlisted registries, isolate Skill memory, structure Skill-to-Skill data flows, and require human confirmation for high-impact actions.

Adopting these guidelines early allows organizations to benefit from the Agent Skills paradigm while reducing the likelihood that this new layer becomes an unmanaged expansion of the agentic attack surface.
