You may have heard the term Model Context Protocol, or MCP, mentioned in the context of AI systems recently. But we’re finding that many developers haven’t had the time to learn what it does or how it fits into agentic workflows. This blog post provides an introduction to what MCP is and how it can be useful.

Briefly, the Model Context Protocol (MCP), introduced in 2024, is a standardized, open source way for large language models (LLMs) to access external tools, giving AI applications a shared ecosystem of tools that support the LLM. These tools include databases, programs on the user's computer, APIs of cloud providers, and more. Such support is critical for enabling agentic AI.

Hundreds of tools are already accessible via MCP.

MCP servers provide standardized support to LLMs

At a basic level, LLMs work through natural language rather than programmatic APIs: they receive instructions as text and return responses as text. This is obvious with chatbots, but it holds for LLMs in general.

Code snippets and other structured information can be included in the input text, and LLMs can return code snippets in the output text, but LLMs cannot run code without additional support.

MCP provides a standardized way to run code on the LLM's behalf without requiring any special capabilities in the LLM itself. With MCP, the LLM is told, as part of the prompt or context, that certain MCP tools (i.e., code) are available and that these tools will be invoked for it if it requests a tool using specific structured formatting in its output text.
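To make "told, as part of the prompt or context" concrete, here is a minimal Python sketch of how a host might render tool descriptions into text the LLM can read. The field names (`name`, `description`, `inputSchema`) follow our understanding of the entries an MCP server returns from its `tools/list` method. The function `build_tool_context` and the reply convention `{"tool": ..., "arguments": ...}` are hypothetical; real hosts typically use each model's own tool-calling format.

```python
import json

# Hypothetical tool descriptions, shaped like the entries an MCP server
# returns from "tools/list": a name, a description, and a JSON Schema
# describing the arguments.
TOOL_DESCRIPTIONS = [
    {
        "name": "get_coordinates",
        "description": "Look up latitude and longitude for a place name.",
        "inputSchema": {
            "type": "object",
            "properties": {"place": {"type": "string"}},
            "required": ["place"],
        },
    },
    {
        "name": "get_weather",
        "description": "Get the forecast for a latitude/longitude pair.",
        "inputSchema": {
            "type": "object",
            "properties": {
                "latitude": {"type": "number"},
                "longitude": {"type": "number"},
            },
            "required": ["latitude", "longitude"],
        },
    },
]


def build_tool_context(tools):
    """Render tool descriptions as plain text to include in the LLM's context."""
    lines = ["You can call the following tools."]
    # Hypothetical reply convention the host will look for in the output.
    lines.append('To call one, reply with only: {"tool": NAME, "arguments": {...}}')
    for tool in tools:
        lines.append(f"- {tool['name']}: {tool['description']}")
        lines.append(f"  arguments schema: {json.dumps(tool['inputSchema'])}")
    return "\n".join(lines)


print(build_tool_context(TOOL_DESCRIPTIONS))
```

The point is that the LLM only ever sees this as text; nothing about the tools is built into the model.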

When an LLM outputs text in the correct format, this output is typically not sent to a human. Instead, an MCP client program intercepts the response, automatically runs the requested tool, and passes the tool's output back to the LLM so that the LLM can continue working.
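The interception step can be sketched in a few lines of Python. This is an illustration under stated assumptions, not how any particular MCP client is implemented: `handle_model_output`, `TOOL_REGISTRY`, and the `{"tool": ..., "arguments": ...}` reply format are hypothetical, and the `get_weather` stub returns canned text rather than calling a real service.

```python
import json


def get_weather(latitude, longitude):
    # Hypothetical stand-in for a real weather tool; returns canned text.
    return f"Forecast for ({latitude}, {longitude}): 14 C, light rain"


# Hypothetical registry mapping tool names to the code that implements them.
TOOL_REGISTRY = {"get_weather": get_weather}


def handle_model_output(text):
    """Route one piece of model output.

    Returns ("tool_result", result) if the text was a request for a known
    tool (which is then run), or ("to_user", text) otherwise.
    """
    try:
        request = json.loads(text)
    except json.JSONDecodeError:
        return ("to_user", text)  # Ordinary prose: show it to the user.
    if not isinstance(request, dict) or request.get("tool") not in TOOL_REGISTRY:
        return ("to_user", text)
    tool = TOOL_REGISTRY[request["tool"]]
    result = tool(**request.get("arguments", {}))
    # In a real client, `result` would be appended to the LLM's context here.
    return ("tool_result", result)


print(handle_model_output('{"tool": "get_weather", "arguments": {"latitude": 47.61, "longitude": -122.33}}'))
print(handle_model_output("Wear a light rain jacket."))
```

The first call is recognized as a tool request and run; the second is plain prose and is passed through unchanged.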

This can create an agentic workflow, in which LLMs autonomously work on complex, multistep tasks (finding tools relevant to the task, getting results from those tools, calling additional tools, and so forth) without a human in the loop.

How MCP works

There are many resources for learning more about MCP, but we thought it would be helpful to walk you through a brief use case.

The technology and applications are changing rapidly, so rather than provide detailed instructions that might quickly become outdated, we instead focus on explaining how MCP works.

Use case: Real-time weather access

Imagine that you would like to build an AI application that suggests an outfit for the user to wear. To make a good suggestion, the LLM needs to consider the user’s style, available clothes, planned activities, and so forth, but it also needs the weather forecast for the locations the user plans to visit.

Information about the user's preferences and activities could perhaps be obtained by the LLM through additional rounds of communication with the user, but it would be awkward if the user had to look up the weather forecast for each planned location.

So, you want the LLM to execute a workflow in which it queries external data sources for the weather forecast, which can be done through MCP, before it makes the outfit suggestion. (You could potentially create MCP servers for other functionality as well, for example to let the LLM query another data source for the user's preferences, but let’s focus on the weather forecast here.)

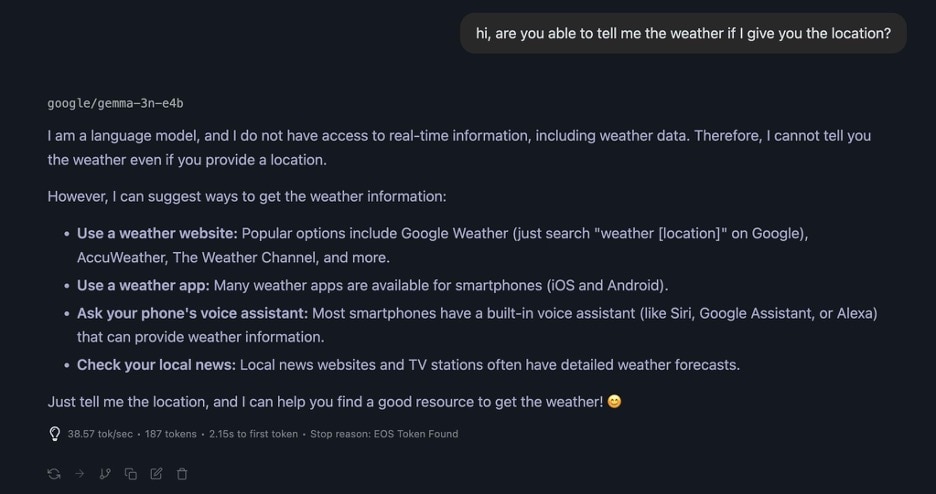

By default, as we've said, an LLM can't run code, so it can't get access to the weather forecast. Figure 1 shows an example of trying this with an LLM running locally.

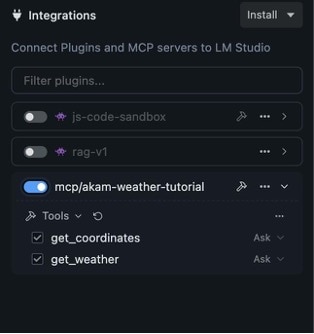

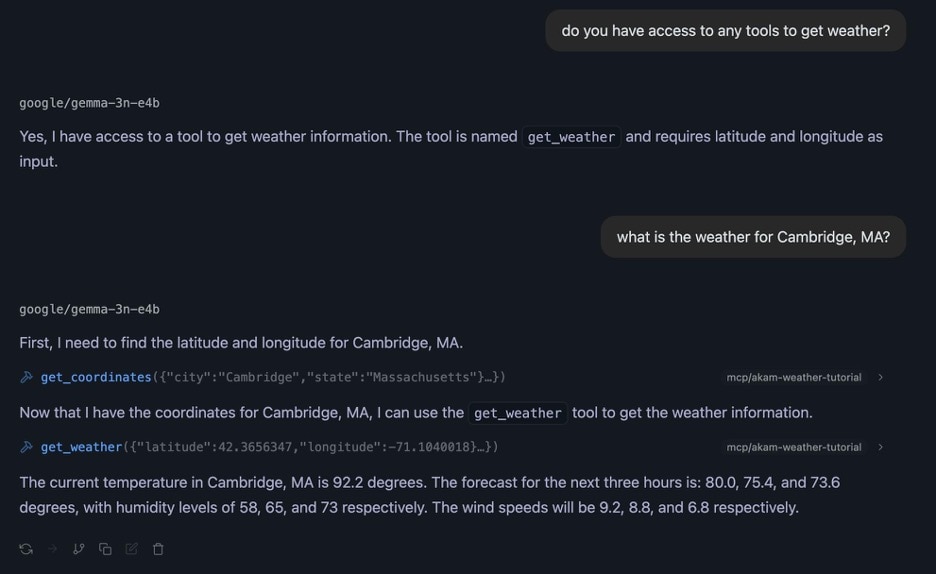

But for this use case, you want the LLM to get that information without involving the user. The solution is to equip the LLM with a weather MCP server so that it can retrieve the real-time information itself (Figure 2 and Figure 3).

Note that, in this example, the LLM makes two separate MCP tool calls (get_coordinates and get_weather) to look up the forecast for a location, but it does not involve the user in this two-step workflow.
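The two-step workflow can be simulated end to end with a scripted stand-in for the LLM. In this sketch, `scripted_model` replays a fixed plan (call `get_coordinates`, then `get_weather`, then answer), and both tools return hypothetical canned data; a real deployment would use an actual model and real MCP servers. The loop in `run_agent` keeps feeding tool results back to the model until it replies with plain text.

```python
import json


def get_coordinates(place):
    # Hypothetical lookup table standing in for a geocoding service.
    known = {"Seattle": (47.61, -122.33)}
    lat, lon = known[place]
    return {"latitude": lat, "longitude": lon}


def get_weather(latitude, longitude):
    # Hypothetical canned forecast standing in for a weather API.
    return {"summary": "light rain", "high_c": 14}


TOOL_REGISTRY = {"get_coordinates": get_coordinates, "get_weather": get_weather}


def scripted_model(messages):
    """A fake LLM that replays a fixed plan so the loop can run offline.

    A real model would read `messages` and decide what to do next; this one
    only counts how many tool results it has seen so far.
    """
    tool_results = [m for m in messages if m["role"] == "tool"]
    if len(tool_results) == 0:
        return json.dumps({"tool": "get_coordinates", "arguments": {"place": "Seattle"}})
    if len(tool_results) == 1:
        coords = json.loads(tool_results[0]["content"])
        return json.dumps({"tool": "get_weather", "arguments": coords})
    forecast = json.loads(tool_results[1]["content"])
    return f"Expect {forecast['summary']}; bring a rain jacket."


def run_agent(model, user_prompt, max_steps=5):
    """Call the model repeatedly, running any requested tool, until it answers."""
    messages = [{"role": "user", "content": user_prompt}]
    for _ in range(max_steps):
        output = model(messages)
        messages.append({"role": "assistant", "content": output})
        try:
            call = json.loads(output)
        except json.JSONDecodeError:
            call = None
        if not isinstance(call, dict):
            return output, messages  # Plain text: treat it as the final answer.
        result = TOOL_REGISTRY[call["tool"]](**call["arguments"])
        messages.append({"role": "tool", "content": json.dumps(result)})
    raise RuntimeError("agent did not finish within max_steps")


answer, transcript = run_agent(scripted_model, "What should I wear in Seattle today?")
print(answer)
```

Running it prints `Expect light rain; bring a rain jacket.` after two tool calls, with no user input in between. The `max_steps` cap is a simple guard against a model that never stops requesting tools.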

The LLM doesn't interact directly with the MCP server

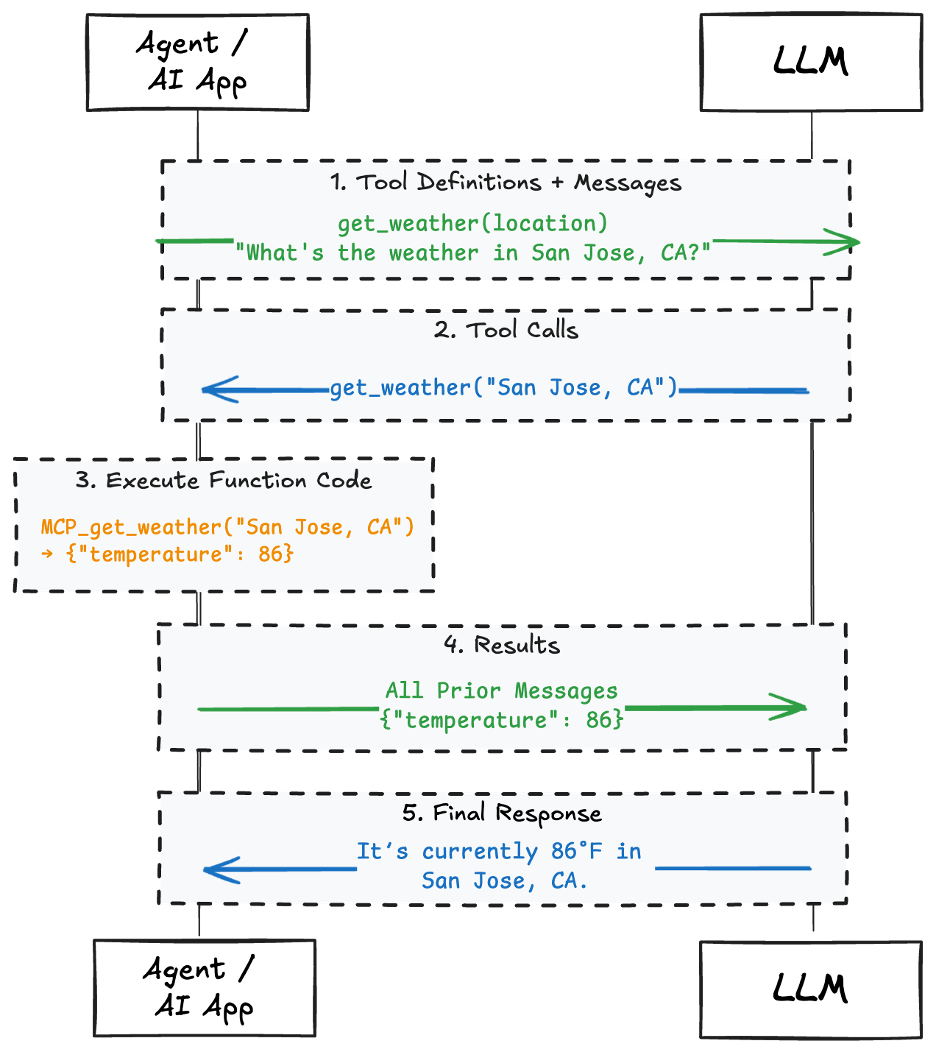

Even with Model Context Protocol, the input to and output from an LLM remain text and only text: the LLM cannot directly invoke code or talk to the MCP server. Instead, when the LLM wants to invoke an MCP tool, it outputs JSON in a particular format corresponding to a JSON-RPC call.
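For reference, here is a sketch of the JSON-RPC 2.0 message that corresponds to such a tool call, along with a minimal server-side handler. Our reading of the MCP specification is that tool invocations use the `tools/call` method with `name` and `arguments` parameters, and that results carry a `content` list of items such as `{"type": "text", ...}`. The helper names and the `get_coordinates` stub are hypothetical, and a real server would also handle initialization, `tools/list`, and transport details omitted here.

```python
import json


def make_tools_call(request_id, name, arguments):
    """Build a JSON-RPC 2.0 request asking an MCP server to run a tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": name, "arguments": arguments},
    }


def get_coordinates(place):
    # Hypothetical stub; a real server would call a geocoding service.
    return {"Seattle": "47.61,-122.33"}.get(place, "unknown")


SERVER_TOOLS = {"get_coordinates": get_coordinates}


def handle_request(raw):
    """Minimal server-side dispatch for a single JSON-RPC message."""
    msg = json.loads(raw)
    if msg.get("method") != "tools/call":
        # -32601 is the standard JSON-RPC 2.0 "Method not found" error code.
        return json.dumps({"jsonrpc": "2.0", "id": msg.get("id"),
                           "error": {"code": -32601, "message": "Method not found"}})
    params = msg["params"]
    text = SERVER_TOOLS[params["name"]](**params["arguments"])
    # The tool result is wrapped as a list of content items; here, one text item.
    return json.dumps({"jsonrpc": "2.0", "id": msg["id"],
                       "result": {"content": [{"type": "text", "text": text}],
                                  "isError": False}})


request = make_tools_call(1, "get_coordinates", {"place": "Seattle"})
print(handle_request(json.dumps(request)))
```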

The LLM host application (e.g., LM Studio) detects this specially formatted call and, instead of passing that output to the user as text, invokes the MCP tool and returns the response to the LLM without involving the user.

Because the host application, rather than the LLM, invokes the tool, neither the LLM (e.g., Claude) nor the provider hosting it (e.g., Anthropic) is given access to the user’s credentials. Information about which tools are available, and potentially how to call them, is passed as additional context from the application to the LLM (Figure 4).
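The credential point can be illustrated with a small sketch: the API key is read inside the tool's own process, and only the tool name, arguments, and result flow back into the LLM's context. The `WEATHER_API_KEY` variable name, the `tool_exchange_for_llm` helper, and the canned forecast are hypothetical; no real weather API is called.

```python
import json
import os


def get_weather(latitude, longitude):
    # The credential is read here, inside the tool process. The variable
    # name WEATHER_API_KEY is a hypothetical example.
    api_key = os.environ.get("WEATHER_API_KEY", "")
    if not api_key:
        return "error: WEATHER_API_KEY is not set"
    # A real tool would send api_key to the weather provider here; this
    # sketch returns canned text instead of making a network call.
    return f"Forecast for ({latitude}, {longitude}): 14 C, light rain"


def tool_exchange_for_llm(name, arguments, result):
    """The only text about this call that flows back into the LLM's context."""
    return json.dumps({"tool": name, "arguments": arguments, "result": result})


os.environ["WEATHER_API_KEY"] = "demo-secret-123"  # Demo value only.
args = {"latitude": 47.61, "longitude": -122.33}
context_text = tool_exchange_for_llm("get_weather", args, get_weather(**args))
print(context_text)
print("secret in LLM context?", "demo-secret-123" in context_text)
```

The last line prints `secret in LLM context? False`: the key is used by the tool but never becomes part of the text the LLM sees.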

A powerful addition

MCP can be a powerful addition to an LLM, enabling more functionality, more real-world use cases, and new AI applications.
