In 2024, NVIDIA announced its graphics processing unit (GPU)–accelerated primal-dual linear programming (PDLP) implementation: cuOpt. The announcement included benchmarking results for a set of problems from the MIPLIB benchmark library and claimed that cuOpt is faster than the state-of-the-art CPU-based LP solver on 60% of the problems.

However, this new method comes with challenges. The initial announcement itself mentioned convergence issues and lower accuracy. Other sources, such as the HiGHS Newsletter and the FICO Blog, have raised similar concerns.

What’s more exciting about cuOpt is NVIDIA’s commitment to open source. Since the announcement, cuOpt has been open sourced and added to COIN-OR, which also maintains a mirror of the repository.

In this blog post, I’ll provide you with a simple guide to get started with cuOpt and share my first impressions of the tool.

Finding a GPU

You guessed it: For the new technique, you need an NVIDIA GPU with CUDA support.

I use a Linode GPU instance with an RTX 4000 Ada GPU (I work at Akamai), which currently costs US$0.52/hour. I also use Linode Images to save money when the GPU is not in use. Linode Images allow you to delete and recreate the same instance without losing the NVIDIA drivers or disk data.

Installation

Installing cuOpt is pretty simple. However, this was my first time dealing with CUDA, so I had to search around a bit to get the right drivers installed.

To help you with installation, I prepared a small GitHub repository with three scripts that you can simply copy and paste to get your machine working with the necessary drivers and cuOpt installed.

These scripts are tested on an Ubuntu 24.04 Linode GPU instance. Installation takes approximately 15 minutes.
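Before running a first solve, it can help to confirm that the installation actually put the expected tools on your PATH. The snippet below is a minimal sketch, not part of the scripts mentioned above: it assumes that the NVIDIA driver provides `nvidia-smi` and that cuOpt provides the `cuopt_cli` command used later in this post. The helper name `missing_tools` is made up for illustration.

```python
import shutil


def missing_tools(names):
    """Return the names from `names` that cannot be found on PATH."""
    return [name for name in names if shutil.which(name) is None]


if __name__ == "__main__":
    # Assumption: nvidia-smi comes with the NVIDIA driver, and cuopt_cli
    # is the cuOpt command-line entry point used later in this post.
    missing = missing_tools(["nvidia-smi", "cuopt_cli"])
    if missing:
        print("Not found on PATH:", ", ".join(missing))
    else:
        print("Driver tools and cuOpt CLI are on PATH.")
```

If either tool is reported as missing, the driver or cuOpt step of the installation most likely did not complete.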

First try

I wanted to see the utilization of the GPU and CPU, so I downloaded a good-sized problem from the MIPLIB benchmark set: triptim1. The problem has approximately 30,000 variables and approximately 15,000 constraints.

I used the cuOpt CLI to solve the model:

cuopt_cli triptim1.mps --mip-absolute-gap 0.05 --time-limit 100

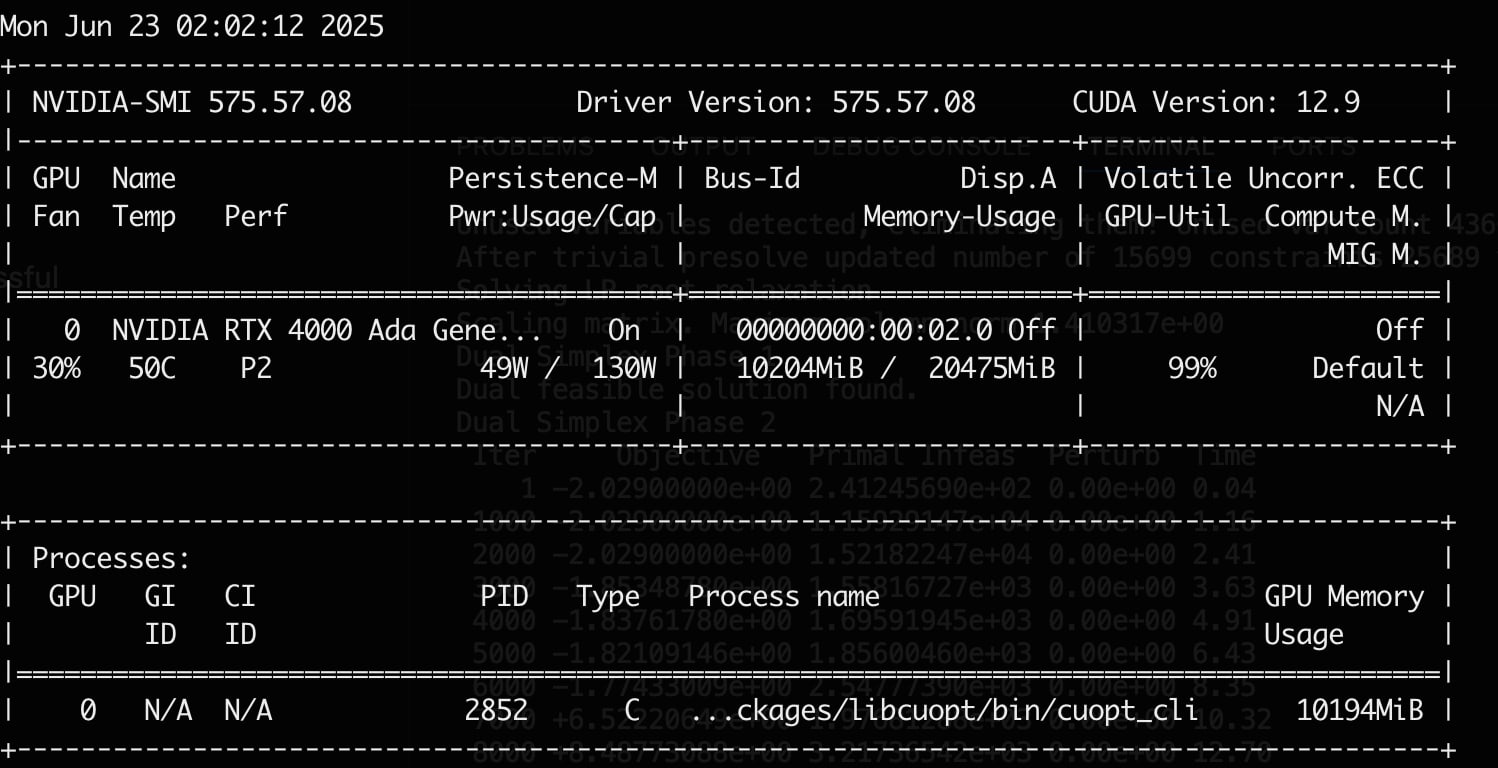

The GPU was under 100% load for the full duration of the solve (Figure 1).

The CPU, by contrast, used only two of the 16 cores I had (Figure 2).
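If you want numbers rather than screenshots, one option is to sample `nvidia-smi` while the solver runs. The following is a rough sketch under that assumption: it uses the `--query-gpu` and `--format` options of `nvidia-smi`, and the function names `parse_gpu_sample` and `read_gpu_sample` are made up for this example.

```python
import subprocess

# Ask nvidia-smi for GPU utilization (%) and used memory (MiB) as bare CSV.
QUERY = [
    "nvidia-smi",
    "--query-gpu=utilization.gpu,memory.used",
    "--format=csv,noheader,nounits",
]


def parse_gpu_sample(text):
    """Parse nvidia-smi CSV output into (utilization %, memory MiB) tuples, one per GPU."""
    samples = []
    for line in text.strip().splitlines():
        util, mem = (field.strip() for field in line.split(","))
        samples.append((int(util), int(mem)))
    return samples


def read_gpu_sample():
    """Run nvidia-smi once and return the parsed sample (requires an NVIDIA driver)."""
    out = subprocess.run(QUERY, capture_output=True, text=True, check=True).stdout
    return parse_gpu_sample(out)
```

Calling `read_gpu_sample()` once per second from a second terminal (for example in a loop with `time.sleep(1)`) while `cuopt_cli` is running gives a simple utilization trace for the solve.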

Doubling the GPUs

Over the past decade, CPU clock speeds have plateaued (generally staying under approximately 5 GHz), mainly due to power and thermal limits. This has limited how much mathematical solvers gain from new hardware, so most improvements over the years have come from advances in heuristics.

PDLP changed this by making use of parallelism to a much greater degree. The rapid growth of AI has shown how powerful parallelism can be, especially when scaling across GPU clusters. GPU-accelerated solvers are exciting because their performance can now improve year over year with hardware advancements, allowing models to scale faster over time.

I’ve been looking for some evidence that cuOpt scales well simply by using more (or faster) GPUs. One piece of evidence I found was the Mittelmann LP benchmark results from June 22, 2025, which tested six very large LP problems on two different GPUs and reported the time to solve each problem (in seconds; Figure 3).

| Problem | RTX A6000 cuPDLP | RTX A6000 cuOpt | H100 cuPDLP | H100 cuOpt |
|---|---|---|---|---|
| heat_250_10_500_200 | 13898 | 18904 | 7288 | 4611 |
| heat_250_10_500_300 | 10184 | 11323 | 3696 | 4384 |
| heat_250_10_500_400 | 5420 | 5124 | 3014 | 3233 |
| mcf_2500_100_500 | 4859 | 14855 | 1166 | 1381 |
| mcf_5000_50_500 | 5797 | 8403 | 2260 | 10134 |
| mcf_5000_100_250 | 19584 | 8657 | 1461 | 2139 |

| Problem | Constraints | Variables | Nonzeros |
|---|---|---|---|
| heat* | 15625000 | 31628008 | 125000000 |
| mcf_2500_100_500 | 1512600 | 126250100 | 253750100 |
| mcf_5000_100_250 | 1775100 | 127500100 | 257500100 |
| mcf_5000_50_500 | 2775050 | 126250050 | 253750050 |

Fig. 3: The time (in seconds) to solve six very large LP problems on two different GPUs

These cards are hard to compare directly, but in three different benchmarks (see one, two, and three) the H100 performs at least two times better than the RTX A6000. The H100 was roughly five times more expensive than the RTX A6000. Keeping this data in mind, the benchmark results from Mittelmann seem promising: with cuOpt, the H100 was roughly 1.6 to 10.8 times faster than the RTX A6000 on five of the six problems and slower on one (mcf_5000_50_500).
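The per-problem ratios behind this comparison are easy to recompute from the solve times in Figure 3. Here is a small sketch: the times are copied from the table, while the layout of `TIMES` and the helper name `h100_speedup` are my own choices for illustration.

```python
# Solve times in seconds from the Mittelmann table (Figure 3), as
# (RTX A6000 cuPDLP, RTX A6000 cuOpt, H100 cuPDLP, H100 cuOpt).
TIMES = {
    "heat_250_10_500_200": (13898, 18904, 7288, 4611),
    "heat_250_10_500_300": (10184, 11323, 3696, 4384),
    "heat_250_10_500_400": (5420, 5124, 3014, 3233),
    "mcf_2500_100_500": (4859, 14855, 1166, 1381),
    "mcf_5000_50_500": (5797, 8403, 2260, 10134),
    "mcf_5000_100_250": (19584, 8657, 1461, 2139),
}


def h100_speedup(solver):
    """Return {problem: A6000 time / H100 time} for 'cuPDLP' or 'cuOpt'."""
    col = {"cuPDLP": 0, "cuOpt": 1}[solver]
    # The H100 column for a solver sits two positions after its A6000 column.
    return {name: row[col] / row[col + 2] for name, row in TIMES.items()}


for solver in ("cuPDLP", "cuOpt"):
    for name, ratio in h100_speedup(solver).items():
        print(f"{solver:7s} {name:22s} {ratio:5.2f}x")
```

A ratio above 1 means the H100 was faster. Dividing each ratio by the roughly fivefold price difference mentioned above gives a crude speedup-per-dollar figure, which is worth checking before renting the larger card.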

The problems Mittelmann chose for these benchmarks are very large, and the results may not carry over to many of the fields where mathematical solvers are used. It is hard to say which types of problems would benefit from a larger GPU, or by how much. I encourage everyone to try cuOpt with different cards and observe how the scaling works for their problem.

Now is the time

GPUs are changing the way we solve problems. Many problem domains have already benefited from GPU acceleration, and now it’s time for mathematical solvers to benefit as well.

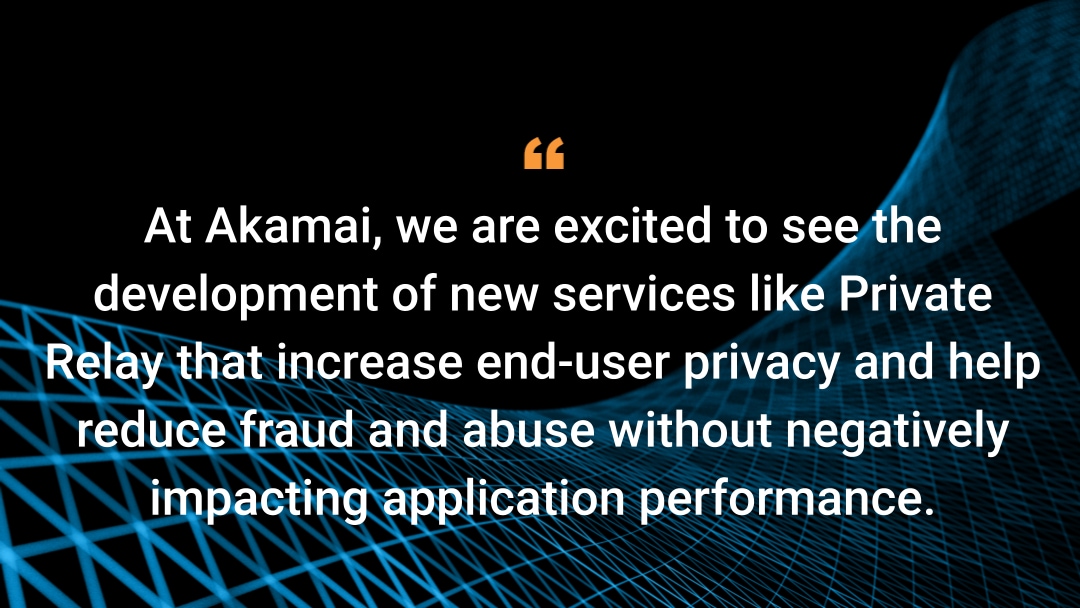

I appreciate NVIDIA’s strategy to open source cuOpt and advance the field with a strong product. I also expect that commercial solvers will aim to surpass cuOpt, ultimately providing users with even more powerful tools in the future.
