For most large enterprises, that edge network is Akamai. With almost 4,400 points of presence (PoPs) in more than 130 countries and roughly 300,000 servers, Akamai sits in front of the majority of Fortune 500 applications.

This internet front door has been in place for decades. It’s responsible for caching, applying firewalls, absorbing distributed denial-of-service (DDoS) attacks, and managing bots. If your team is programming the edge with Akamai EdgeWorkers or other edge compute, the existence of this edge is not news.

But what the internet’s front door can do has changed recently, and it’s probably time to reassess your strategy.

The edge has traditionally been narrow

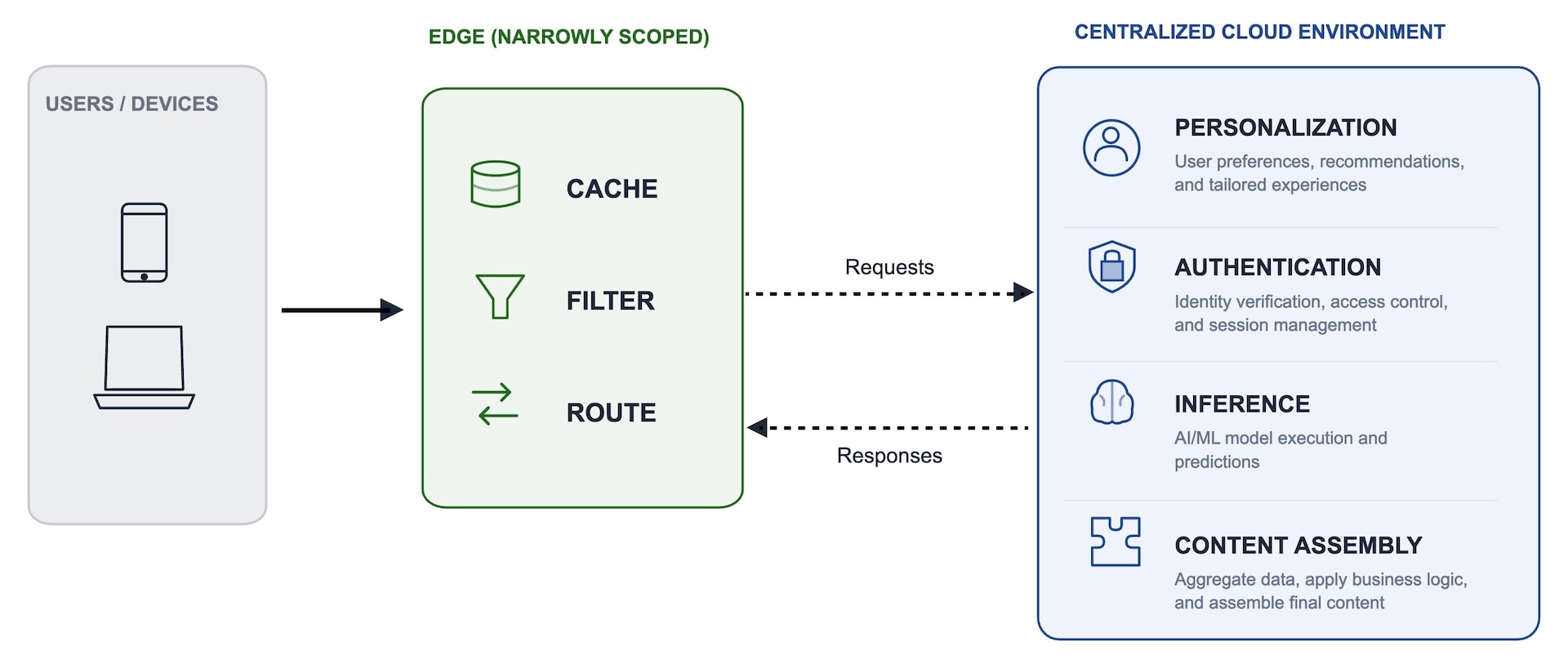

Until recently, what the edge could do was narrowly scoped. It cached, it filtered, and it routed. The remainder of the work (such as personalization, authentication, inference, and content assembly) continued to flow back to a centralized cloud environment (Figure 1).

This architecture was appropriate and defensible when application complexity was relatively low and user expectations were simple. Both have changed. Along with them has come an architectural shift from monolithic to distributed applications, which allows application logic to run closer to the end user. Today, a narrow edge backed by heavy centralized request handling is difficult to defend.

Why centralized request handling is outdated

Several factors have changed the cost-benefit analysis of centralized request handling:

- Latency translates directly to revenue

- Bots are a majority of traffic

- Capacity is a constant cost

Latency translates directly to revenue

The Deloitte and Google study “Milliseconds Make Millions,” which spans 37 brands and 30 million sessions, found that a 0.1-second improvement in performance increased retail conversions by 8.4% and order value by 9.2%.

The same 0.1-second improvement increased travel conversions by 10.1% and lead generation by 21.6%. Amazon's long-cited internal benchmark puts the cost of every 100 milliseconds of added latency at roughly 1% of revenue.
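To make the Amazon rule of thumb concrete, here is a minimal sketch that applies it linearly. The function name, the linear extrapolation, and the $50M revenue figure are illustrative assumptions, not results from either study; real effects vary by industry, device, and page type.

```python
def estimated_revenue_change(annual_revenue: float, latency_saved_ms: float,
                             pct_per_100ms: float = 1.0) -> float:
    """Apply a linear rule of thumb: each 100 ms removed is worth pct_per_100ms % of revenue.

    Assumption: the benchmark scales linearly, which is a simplification.
    """
    return annual_revenue * (latency_saved_ms / 100.0) * (pct_per_100ms / 100.0)


# Hypothetical business: $50M in annual online revenue, 150 ms removed from the critical path.
at_stake = estimated_revenue_change(50_000_000, 150)
print(f"Estimated annual revenue at stake: ${at_stake:,.0f}")
```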

When a request makes a round trip to the origin for a decision that could have been made at the edge, the business pays twice: once in performance degradation and again in lost revenue.

The standard answer has been to add regions. But for a global business, weighing transoceanic latency against the cost of another region (and of segregating data across regions) is a difficult trade-off.

Bots are a majority of traffic

According to the 2026 State of the Internet (SOTI) Security report, Protecting Publishing: Navigating the AI Bot Era, AI bot activity surged by 300% in 2025, and bots now account for 51% of global web traffic.

This traffic consists of both bots that an enterprise wants to serve (search indexers, personal AI agents, and selected AI crawlers) and bots it does not want to serve (scrapers, price surveillance, low-value automation). Bad bots now make up nearly two-thirds of bot traffic and 37% of all internet traffic.

Classifying these bots at the origin is expensive: Every bot request consumes origin compute and egress before you can decide whether to serve it, so you are effectively paying a bot tax on your cloud bill.

Capacity is a constant cost

In theory, cloud infrastructure scales quickly to absorb traffic spikes. In practice, container-based scaling is often too slow to react to a sudden surge: By the time new capacity comes online, the spike may already have passed.

The workaround is preprovisioning and overprovisioning: Enterprises keep clusters running at high capacity for a peak that may or may not arrive. Surveys show that idle Kubernetes capacity wastes between 30% and 65% of the resources companies pay for, because idle capacity is billed at the same rate as capacity in use.
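The cost of sizing for peak can be sketched in a few lines. This is a minimal illustration, assuming a cluster provisioned for a hypothetical 100-vCPU peak that averages 40 vCPUs of load on a hypothetical $200,000 monthly bill; the function names are illustrative.

```python
def idle_fraction(average_load: float, provisioned_capacity: float) -> float:
    """Share of provisioned capacity that goes unused on average."""
    if provisioned_capacity <= 0:
        raise ValueError("provisioned_capacity must be positive")
    return max(0.0, 1.0 - average_load / provisioned_capacity)


def idle_spend(monthly_cluster_cost: float, fraction_idle: float) -> float:
    """Portion of the bill paid for idle capacity.

    Idle capacity is billed at the full rate, so the waste is the bill
    multiplied by the fraction of provisioned resources left unused.
    """
    if not 0.0 <= fraction_idle <= 1.0:
        raise ValueError("fraction_idle must be between 0 and 1")
    return monthly_cluster_cost * fraction_idle


# Hypothetical cluster sized for a 100-vCPU peak that averages 40 vCPUs of load.
fraction = idle_fraction(average_load=40, provisioned_capacity=100)
print(f"{fraction:.0%} idle -> ${idle_spend(200_000, fraction):,.0f} of a $200,000 monthly bill")
```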

Egress compounds the problem: One hyperscaler, for example, charges US$0.09 per GB of egress, plus another US$0.045 per GB for traffic that crosses a NAT gateway. Gartner estimates that clients spend 10% to 15% of their cloud bills on egress, and 30% to 40% for data-intensive workloads.
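A quick calculation shows how those per-GB rates add up. This sketch uses the example rates above as defaults and assumes a flat rate (real price sheets are usually tiered) and a hypothetical 50 TB of monthly egress; `nat_share` is an illustrative parameter for the fraction of traffic that also crosses a NAT gateway.

```python
def monthly_egress_cost(gb_out: float, egress_per_gb: float = 0.09,
                        nat_per_gb: float = 0.045, nat_share: float = 1.0) -> float:
    """Estimate monthly egress charges in US dollars.

    gb_out: data leaving the cloud in a month, in GB.
    nat_share: fraction of that traffic that also crosses a NAT gateway.
    Assumption: flat per-GB rates with no volume tiers or free allowance.
    """
    return gb_out * egress_per_gb + gb_out * nat_share * nat_per_gb


# Hypothetical: 50 TB (50,000 GB) per month, all of it routed through a NAT gateway.
print(f"${monthly_egress_cost(50_000):,.2f}")
```

Every request answered at the edge instead of the origin removes its response bytes from `gb_out`, which is where the savings in this model come from.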

Add viral traffic events, flash ecommerce sales, and AI-crawler surges, and your costs can scale faster than your revenue.

These factors have reopened the question of where work should be executed, and the answer increasingly points toward the edge.

The evolution of the modern connected edge

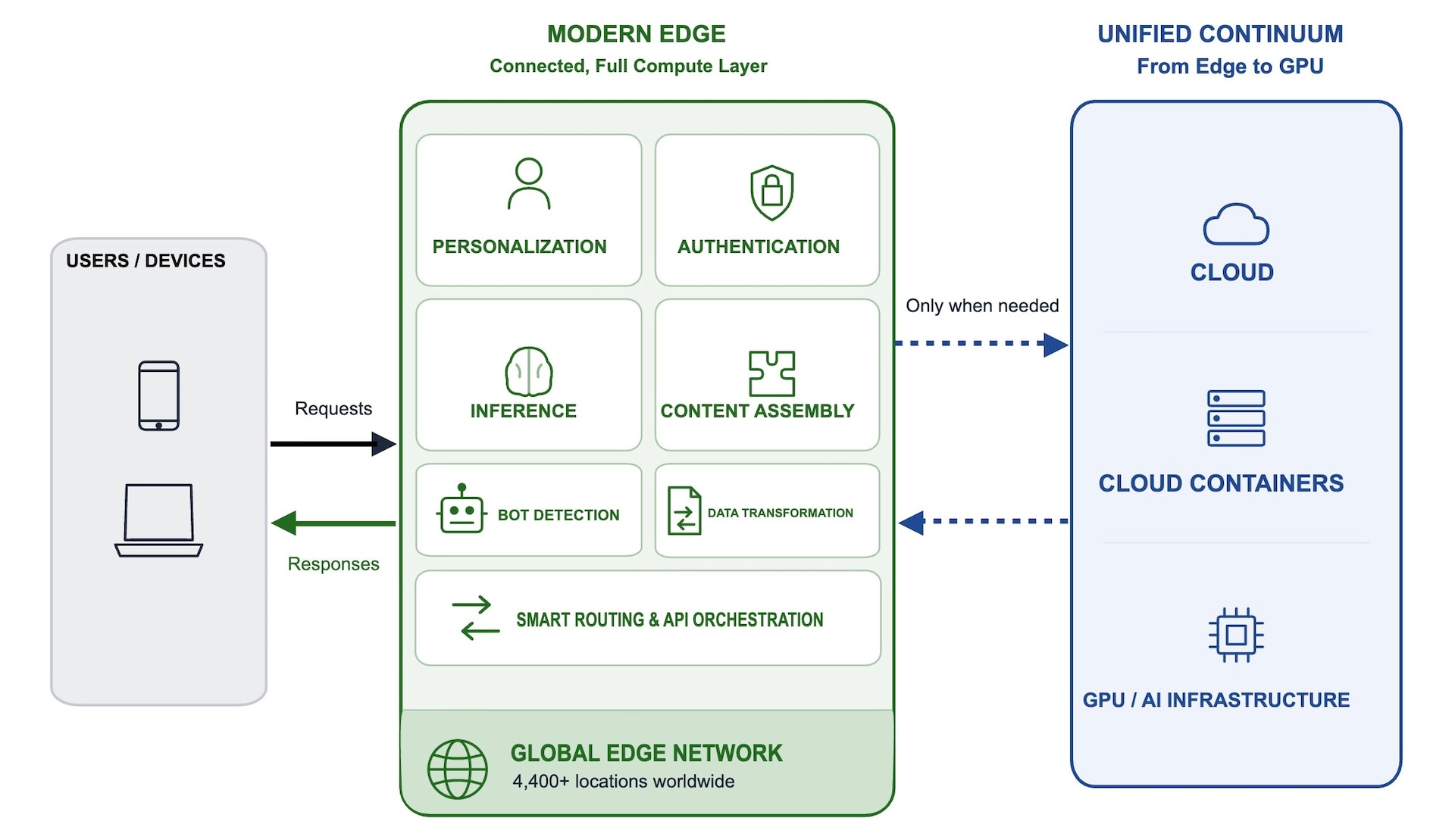

The modern edge has evolved into a connected, full compute layer (Figure 2). Decisions that once required a round trip to a centralized region can now be made at the point of ingress, which addresses both the latency and cost problems described above.

Support complex application logic at ingress

The modern edge has evolved from simple, isolated scripts to full modular applications that handle everything from real-time data transformation to complex API orchestration. By moving these services to the point of ingress, enterprises can retire the specialized legacy infrastructure they maintain for middleware layers.

This model greatly reduces latency costs: Personalization, routing, and content assembly that took 200+ milliseconds as a round trip to a centralized region can complete in less than 50 milliseconds at the edge. And work that terminates at the edge doesn’t require a centralized scale-up.

Create a smart glue of integrated logic

Modern edge logic serves as the connective tissue between systems; for example, a bot-detection result can trigger real-time content rewriting or a security classification. By executing application logic at the front door, enterprises can unify their security controls, delivery infrastructure, inference, and core compute into a single, synchronized workflow.

This is where the bot tax shrinks: Classification and content shaping happen inline with the request, before the origin sees the traffic, so your cloud bill no longer pays for bad bots.
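The inline decision described above can be sketched as a small classifier that runs before any origin call. This is a hypothetical illustration of the decision logic only, not the Akamai Functions API or Akamai's bot-management logic: the token lists and action names are assumptions, and production systems rely on many more signals than the User-Agent string, which clients can trivially spoof.

```python
from dataclasses import dataclass

# Hypothetical policy lists for illustration only.
ALLOWED_BOTS = ("googlebot", "bingbot")
BLOCKED_BOTS = ("scrapy", "python-requests", "curl")


@dataclass
class Decision:
    action: str  # "forward", "serve_from_edge", or "block"
    reason: str


def classify(user_agent: str) -> Decision:
    """Decide at the edge what to do with a request, before it reaches the origin."""
    ua = user_agent.lower()
    if any(token in ua for token in BLOCKED_BOTS):
        # Unwanted automation is dropped here and never consumes origin capacity.
        return Decision("block", "known unwanted automation")
    if any(token in ua for token in ALLOWED_BOTS):
        # Wanted crawlers get a cached or edge-rendered response.
        return Decision("serve_from_edge", "allowed crawler")
    return Decision("forward", "no bot signal; send to origin")


print(classify("Mozilla/5.0 (compatible; Googlebot/2.1)").action)
```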

Implement a unified continuum from edge to GPU

The edge now extends beyond individual PoPs. It can offer a single deployment model that spans almost 4,400 global edge locations, cloud containers, and high-performance GPU inference environments.

Not every workload belongs at the edge, but the ones that do can seamlessly communicate with core compute and AI models.
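One way to reason about which workloads belong at the edge is a simple placement rule. The sketch below is hypothetical: the `Workload` fields, the tier names, and the 10 MB working-set threshold are illustrative assumptions, not a policy from Akamai or any other platform.

```python
from dataclasses import dataclass


@dataclass
class Workload:
    name: str
    latency_sensitive: bool
    needs_gpu: bool
    working_set_mb: int  # data the workload must read per request


def place(w: Workload, edge_working_set_limit_mb: int = 10) -> str:
    """Pick a tier using illustrative rules: GPU first, then edge, else cloud."""
    if w.needs_gpu:
        return "gpu"
    if w.latency_sensitive and w.working_set_mb <= edge_working_set_limit_mb:
        return "edge"
    return "cloud"


for w in (Workload("redirects", True, False, 1),
          Workload("recommendations", True, True, 500),
          Workload("reporting", False, False, 2000)):
    print(w.name, "->", place(w))
```

Because all three tiers share one deployment model in the architecture described here, a rule like this decides where a function runs rather than which codebase it lives in.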

Adopt portability through open standards

To avoid the lock-in of legacy cloud providers, the modern edge is built on portable, open standards that allow teams to write code in the languages they already use, such as JavaScript, Python, Rust, or Go. With this flexibility, developers can move functions between environments without expensive rewrites.

Akamai Functions is a smarter, more connected edge

For years, the promise of edge computing was hampered by proprietary languages, sluggish start times, and fragmented workflows. Akamai Functions is designed to remove these barriers.

Akamai Functions is an edge-native serverless platform that runs globally across Akamai Cloud. It allows enterprises to run full application logic directly at the edge, integrated with Akamai's compute, security, and AI services.

This is important for three reasons:

- One of the greatest historical risks of the edge was runtime lock-in, where code written for one provider was useless elsewhere. By letting your team write in languages they already know (like JavaScript, Python, Rust, or Go) and by building on WebAssembly, Akamai Functions keeps your logic from being tied to a single vendor’s compute stack.

- We’ve moved past the era of isolated scripts. Akamai Functions serves as the intelligent glue between security controls, content delivery, centralized cloud compute, and AI inference. A single request at the front door can trigger a chain of events, such as authenticating the user, checking a security profile, and fetching an AI-driven recommendation, all before the request leaves the edge network.

- Akamai Functions gives you a fast, unified execution model. The same deployment model that powers your edge locations extends directly into centralized cloud containers and high-performance GPU environments. With sub-millisecond cold starts, the traditional performance penalty of serverless is effectively eliminated, so important, latency-sensitive logic runs at ingress, as close as possible to the end user.
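The chained request flow described above (authenticate, check a security profile, fetch a recommendation) can be sketched as an ordered list of stages in which the first stage to produce a response ends the chain. Every stage name, the risk-score threshold, and the fixed recommendation list are hypothetical stand-ins; this is not the Akamai Functions programming model.

```python
from typing import Callable, Optional

Request = dict
Response = dict
Stage = Callable[[Request], Optional[Response]]


def authenticate(req: Request) -> Optional[Response]:
    # Hypothetical check: a real deployment would validate a signed token.
    if not req.get("token"):
        return {"status": 401, "body": "missing credentials"}
    return None  # continue to the next stage


def check_security_profile(req: Request) -> Optional[Response]:
    # Hypothetical risk threshold for illustration.
    if req.get("risk_score", 0) > 80:
        return {"status": 403, "body": "request blocked"}
    return None


def recommend(req: Request) -> Optional[Response]:
    # Stand-in for a call to an inference service; returns a fixed list here.
    return {"status": 200, "body": {"user": req["user"], "items": ["a", "b", "c"]}}


def handle(req: Request, stages: list[Stage]) -> Response:
    """Run stages in order; the first stage that returns a response ends the chain."""
    for stage in stages:
        resp = stage(req)
        if resp is not None:
            return resp
    return {"status": 500, "body": "no stage produced a response"}


PIPELINE = [authenticate, check_security_profile, recommend]
print(handle({"user": "u1", "token": "t", "risk_score": 10}, PIPELINE)["status"])
```

Short-circuiting keeps rejected requests cheap: an unauthenticated or high-risk request never reaches the inference call.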

What’s running at your front door?

The front door you already have is doing important work. But in a world of surging egress costs and AI-driven traffic, you should be asking: “What decisions are we still sending to the origin that could be made at the edge?”

The companies that are gaining a competitive edge are moving beyond simple caching to embrace a more connected perimeter. They're using Akamai Functions to shift high-frequency tasks to the edge, including:

- Mass redirects at global scale. Improve performance and reduce origin load with ultra-fast redirects at the edge.

- Token management. Protect content globally by issuing, validating, and revoking tokens at the edge.

- Bot misdirects. Intercept and control bot traffic at the edge without breaking SEO or impacting real users.

- Hyperpersonalization. Optimize and route dynamic content by location to improve relevance and performance.
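Token management at the edge typically means checking a signed, expiring token without calling back to the origin. Here is a minimal HMAC-SHA256 sketch, assuming a shared secret and a made-up `<expiry>.<signature>` token format; it is not the token format of any Akamai product, and in practice the revocation list would need to be distributed to every edge location.

```python
import base64
import hashlib
import hmac

SECRET = b"replace-with-a-real-secret"  # hypothetical shared key


def issue_token(path: str, expires_at: int, key: bytes = SECRET) -> str:
    """Return "<expiry>.<signature>" binding a path to an expiry time (Unix seconds)."""
    message = f"{path}:{expires_at}".encode()
    sig = hmac.new(key, message, hashlib.sha256).digest()
    return f"{expires_at}.{base64.urlsafe_b64encode(sig).decode().rstrip('=')}"


def validate_token(path: str, token: str, now: int, key: bytes = SECRET,
                   revoked: frozenset = frozenset()) -> bool:
    """Check revocation, expiry, and signature locally, without contacting the origin."""
    if token in revoked:
        return False
    try:
        expiry_str, _ = token.split(".", 1)
        expires_at = int(expiry_str)
    except ValueError:
        return False  # malformed token
    if now >= expires_at:
        return False  # expired
    expected = issue_token(path, expires_at, key)
    # Constant-time comparison avoids leaking signature bytes through timing.
    return hmac.compare_digest(expected.encode(), token.encode())
```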

With Akamai Functions, the front door is an intelligent, connected entrance that dramatically reduces origin load.

Akamai Functions: A smarter, more connected edge

Ready to move your apps to the smarter, more connected edge? View the Functions Quick Start Guide in TechDocs.
