Akamai has only milliseconds to apply detection algorithms and decide whether a user request to a protected website is malicious. These detections rely on machine learning (ML) models and heuristic analyses, both probabilistic by nature, operating within a constantly evolving cyberattack landscape. False negatives (FNs) and false positives (FPs) are therefore inevitable.
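To make the trade-off concrete, the following minimal sketch counts FPs and FNs when a probabilistic score is cut at a fixed threshold. The scores, labels, and the `confusion_at` helper are made up for illustration and do not represent Akamai's detections.

```python
from dataclasses import dataclass
from typing import Sequence


@dataclass(frozen=True)
class Confusion:
    tp: int  # malicious requests correctly flagged
    fp: int  # legitimate requests wrongly flagged
    tn: int  # legitimate requests correctly allowed
    fn: int  # malicious requests wrongly allowed


def confusion_at(scores: Sequence[float], labels: Sequence[bool], threshold: float) -> Confusion:
    """Count outcomes when every request scoring >= threshold is flagged."""
    tp = fp = tn = fn = 0
    for score, is_malicious in zip(scores, labels):
        flagged = score >= threshold
        if flagged and is_malicious:
            tp += 1
        elif flagged:
            fp += 1
        elif is_malicious:
            fn += 1
        else:
            tn += 1
    return Confusion(tp, fp, tn, fn)


# Hypothetical scores for six requests; True marks a request that really was malicious.
scores = [0.95, 0.80, 0.65, 0.40, 0.30, 0.10]
labels = [True, True, False, True, False, False]

strict = confusion_at(scores, labels, threshold=0.9)    # few FPs, more FNs
lenient = confusion_at(scores, labels, threshold=0.35)  # few FNs, more FPs
print(strict)
print(lenient)
```

With this toy data, neither threshold removes both error types: raising it trades FPs for FNs, and lowering it does the opposite.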

To remain effective, deeper analyses — such as model evaluation, tuning, retraining, and detection refinement — must occur outside the real-time request processing path.

Traditionally, this “second line of defense” was handled by data scientists who developed and maintained models through highly manual, craft-like processes. These workflows were often ad hoc, loosely defined, nondeterministic, and prone to error. As a result, changes could easily introduce new or different FNs and FPs, and required lengthy monitoring periods before they could be safely enabled in production.

Establishing a common security machine learning operations (MLOps) platform, with a well-defined technology stack, unified data access, and formalized real-time support processes, has significantly improved serviceability, reliability, and overall efficacy.

Yesterday: The birth of Akamai security MLOps

Even after more than 15 years in the mainstream, the machine learning ecosystem remains highly fragmented. A wide variety of frameworks, libraries, and tools can be used to achieve the same, often complex, goals in very different ways.

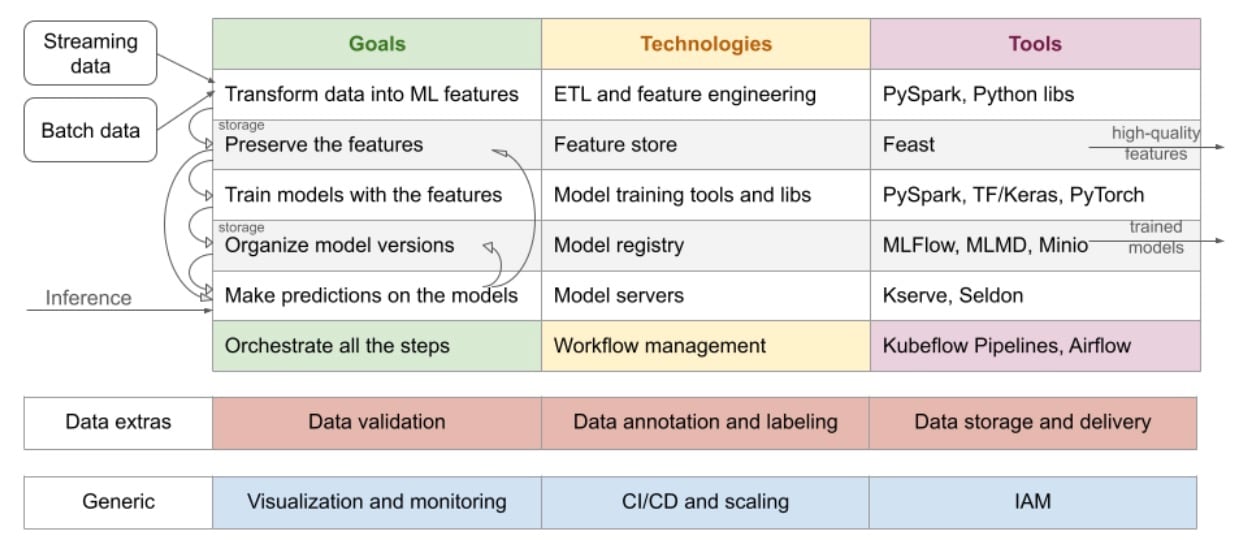

Fortunately, the operational steps of ML applications are relatively consistent and well understood (Figure 1).

This makes them suitable for generalization and standardization as the foundation of an MLOps platform, even when individual steps may support multiple equally popular technology choices (Figure 2).

This unified MLOps platform makes it possible to produce ML solutions with greater speed, reliability, and scalability. It bridges the gap between model development, tuning, and deployment; streamlines ML application lifecycle management; ensures reproducibility; enforces governance; and fosters collaboration among teams.

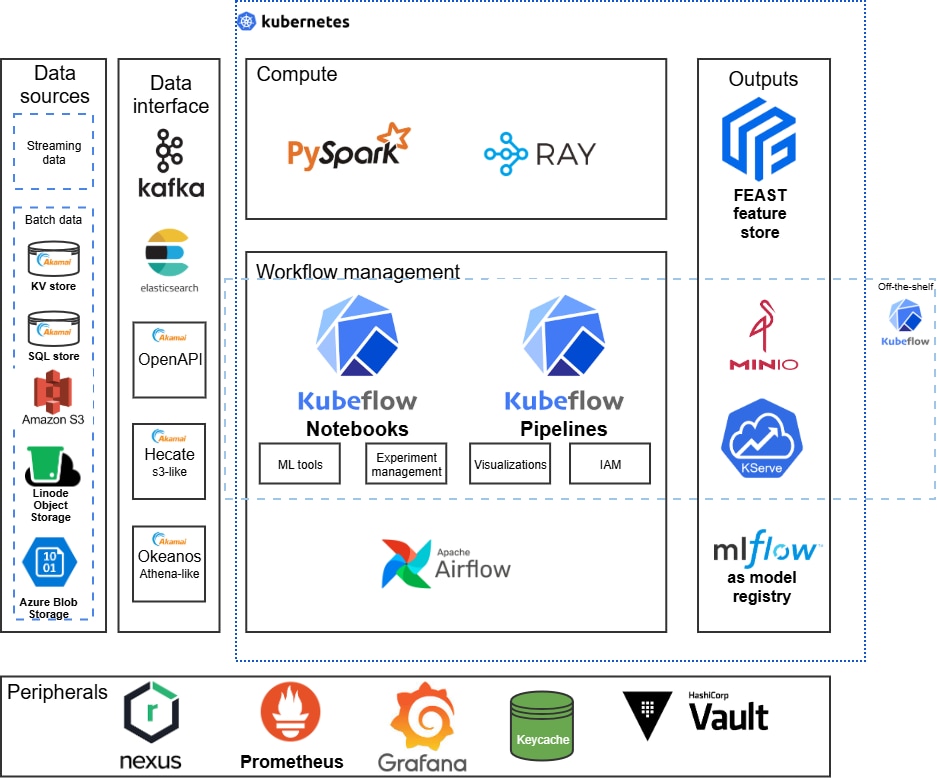

At the heart of the platform lies Kubeflow, an open source, Kubernetes-native toolkit for running ML workflows. Kubeflow provides an end-to-end infrastructure stack for data management, training jobs, hyperparameter tuning, model serving, and monitoring, all containerized and orchestrated on Kubernetes.

The platform ensures unified access to data sources — whether they reside in object storage, data lakes, relational databases, or streaming platforms — thanks to connectors available through Spark, Kafka, and native integrations. This allows data scientists and engineers to avoid data silos and build features and models on consistent, versioned data layers.

Additionally, by using Kubernetes namespaces and Kubeflow profiles, the platform supports multi-tenancy out of the box, offering teams isolated workspaces with their own resources, secrets, pipelines, and dashboards. This greatly simplifies user management, access control, and resource governance across teams.

How to enhance Kubeflow’s capabilities

To enhance Kubeflow’s capabilities, we incorporate the following major technologies:

MLflow: Model tracking and registry

Apache Spark: Distributed data preprocessing and feature engineering

Ray: Scalable Python-based distributed training and hyperparameter optimization

Elasticsearch: Indexed data storage and search analytics

HashiCorp Vault: Secure secret management, including API keys, credentials, and tokens

Sonatype Nexus: Artifact repository for ML models, data snapshots, and dependencies

Apache Kafka: Event streaming for real-time data ingestion and monitoring

Together, these components form a cohesive, enterprise-grade MLOps platform. Each tool contributes distinct strengths, while Kubeflow acts as the orchestration layer that unifies and scales the entire ML lifecycle.

By integrating these technologies, the platform provides:

Unified access to diverse data sources, ensuring consistency across ML workflows

Built-in multi-tenancy for secure and collaborative team operations

Streamlined paths from experimentation to production deployment and monitoring

This modern MLOps foundation empowered Akamai to scale machine learning confidently and efficiently across teams, use cases, and environments. In the era of AI-driven security, robust MLOps infrastructure is essential.

Today: Have MLOps, use it

MLOps significantly shortens the distance between research, experimentation, and productization. However, these phases remain distinct, each with its own actors, goals, and requirements.

Research use case

A researcher may need to quickly validate a mitigation idea. Instead of manually extracting, cleansing, and analyzing data locally, and managing results with custom scripts, the researcher can assemble existing components into a Kubeflow notebook or pipeline with just a few lines of code. Results, artifacts, and visualizations are automatically preserved with configurable retention policies.

Experimentation use case

Once an idea shows promise, it must be tested in a more structured environment. The standardized MLOps setup enables workflow scheduling, feature and artifact storage, model serving, and seamless integration with platform-provided ML add-ons. This allows results to be reliably preserved and analyzed over time.

Productization use case

Even validated solutions often require further optimization before full production rollout. For example, a workflow may achieve better performance when executed directly on a Ray cluster rather than through Kubeflow Pipelines. Productization may also require custom visualizations, integration with external tools, or alternative serving patterns, all of which are supported by the MLOps production cluster.

Second line of defense: Workflow perspective

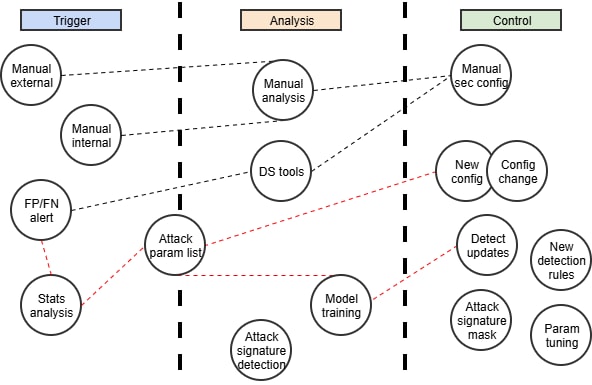

Our support services, the second line of defense, can be broken down into three core steps:

Gather feedback: Detect FPs and FNs

Analyze feedback: Identify insights and attack patterns

Act on insights: Adjust or extend primary detections

These map naturally to three generalized MLOps workflow stages:

Trigger

Analysis

Control

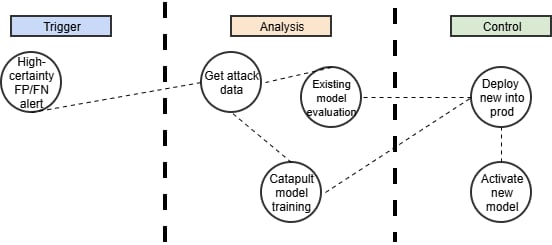

Each stage can be implemented using one or more Kubeflow Pipelines components, which can then be grouped into the required workflows with little or no adjustment (Figure 3).
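The idea of composing trigger, analysis, and control stages into a workflow can be sketched in plain Python. This is an illustration only: it does not use the Kubeflow Pipelines SDK, and `compose`, the three stage functions, and the `max_errors` setting are hypothetical names chosen for the example.

```python
from typing import Any, Callable, Dict

Context = Dict[str, Any]
Step = Callable[[Context], Context]


def compose(*steps: Step) -> Step:
    """Chain steps so each one sees the merged outputs of the steps before it."""
    def workflow(context: Context) -> Context:
        for step in steps:
            context = {**context, **step(context)}
        return context
    return workflow


def trigger(context: Context) -> Context:
    # Gather feedback: keep only records where the detection disagreed with reality.
    feedback = [r for r in context["records"] if r["predicted"] != r["actual"]]
    return {"feedback": feedback}


def analysis(context: Context) -> Context:
    # Analyze feedback: split the disagreements into FPs and FNs.
    fps = sum(1 for r in context["feedback"] if r["predicted"] and not r["actual"])
    return {"false_positives": fps, "false_negatives": len(context["feedback"]) - fps}


def control(context: Context) -> Context:
    # Act on insights: request retraining once the error count reaches a limit.
    errors = context["false_positives"] + context["false_negatives"]
    return {"action": "retrain" if errors >= context["max_errors"] else "keep"}


records = [
    {"predicted": True, "actual": True},
    {"predicted": True, "actual": False},   # FP
    {"predicted": False, "actual": True},   # FN
    {"predicted": False, "actual": False},
]
result = compose(trigger, analysis, control)({"records": records, "max_errors": 2})
print(result["action"])
```

Because every stage reads from and writes to the same context dictionary, a stage can be swapped or reused in another workflow without changing its neighbors.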

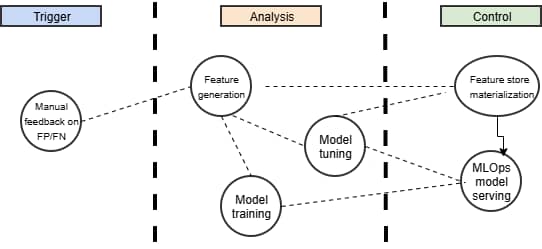

Example 1: Manual feedback loop

A user reviewing mitigated traffic in the Akamai Control Center identifies an FP or FN, which acts as a manual external trigger. This feedback is submitted in real time into a high-quality, labeled ground-truth dataset via MLOps-controlled interfaces.

The data is then transformed into features, materialized in the feature store, and used to trigger model evaluation, tuning, or retraining.

Finally, an improved model version is deployed to the MLOps model server, enhancing inference accuracy for future requests (Figure 4).
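A minimal sketch of the data path in this loop follows, assuming a simplified feedback record and an in-memory stand-in for the feature store. The `Feedback` fields, the feature names, and the 25% error limit are all hypothetical; the real schema and feature store are not described here.

```python
from dataclasses import dataclass
from typing import Dict, List, Sequence


@dataclass(frozen=True)
class Feedback:
    request_id: str
    path: str
    requests_per_minute: int
    was_mitigated: bool   # what the primary detection did
    is_malicious: bool    # the reviewer's verdict, used as ground truth


def to_features(item: Feedback) -> Dict[str, float]:
    """Turn one labeled feedback record into a numeric feature row."""
    return {
        "requests_per_minute": float(item.requests_per_minute),
        "is_login_path": 1.0 if item.path.startswith("/login") else 0.0,
        "label": 1.0 if item.is_malicious else 0.0,
    }


class FeatureStore:
    """In-memory stand-in for a feature store, keyed by request ID."""

    def __init__(self) -> None:
        self._rows: Dict[str, Dict[str, float]] = {}

    def materialize(self, items: Sequence[Feedback]) -> None:
        for item in items:
            self._rows[item.request_id] = to_features(item)

    def rows(self) -> List[Dict[str, float]]:
        return list(self._rows.values())


def error_rate(items: Sequence[Feedback]) -> float:
    """Share of reviewed requests where the detection was an FP or FN."""
    wrong = sum(1 for i in items if i.was_mitigated != i.is_malicious)
    return wrong / len(items) if items else 0.0


feedback = [
    Feedback("r1", "/login", 120, True, True),
    Feedback("r2", "/search", 15, True, False),        # FP reported by the user
    Feedback("r3", "/login/reset", 300, False, True),  # FN reported by the user
    Feedback("r4", "/home", 5, False, False),
]
store = FeatureStore()
store.materialize(feedback)
needs_retraining = error_rate(feedback) > 0.25  # hypothetical limit
print(len(store.rows()), needs_retraining)
```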

Example 2: Automated retraining

For certain endpoints, such as those involving username submission, FP and FN detection can be performed automatically with high confidence. In this case, retraining of a catapult model used in our primary detection layer can be triggered in real time.

Existing models are evaluated against recent attack traffic. If no satisfactory model is found, a new one is trained. When a more effective model emerges, it is automatically deployed to production, improving inference for subsequent requests for that account (Figure 5).
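The evaluate, retrain, and deploy decision described above can be sketched as follows. This is a toy under stated assumptions: models are single-feature threshold functions, accuracy is the only metric, and `train_threshold_model`, `select_model`, and `maybe_deploy` are hypothetical helpers rather than the model or logic used in production.

```python
from typing import Callable, Dict, List, Tuple

Sample = Tuple[float, bool]      # (feature value, is_malicious)
Model = Callable[[float], bool]  # returns True when the request is flagged


def accuracy(model: Model, traffic: List[Sample]) -> float:
    return sum(1 for x, y in traffic if model(x) == y) / len(traffic)


def train_threshold_model(traffic: List[Sample]) -> Model:
    """Toy trainer: choose the observed value that works best as a cut-off."""
    candidates = sorted({x for x, _ in traffic})
    best_cut = max(candidates, key=lambda cut: accuracy(lambda x: x >= cut, traffic))
    return lambda x: x >= best_cut


def select_model(registry: Dict[str, Model], traffic: List[Sample],
                 min_accuracy: float) -> Tuple[str, Model]:
    """Reuse the best existing model if it is good enough, else train a new one."""
    name, model = max(registry.items(), key=lambda kv: accuracy(kv[1], traffic))
    if accuracy(model, traffic) >= min_accuracy:
        return name, model
    return "retrained", train_threshold_model(traffic)


def maybe_deploy(current: str, registry: Dict[str, Model], traffic: List[Sample],
                 min_accuracy: float) -> str:
    """Return the name of the model to serve after evaluating recent traffic."""
    name, model = select_model(registry, traffic, min_accuracy)
    if accuracy(model, traffic) > accuracy(registry[current], traffic):
        registry[name] = model  # record the winner so it can be served
        return name
    return current


# Hypothetical recent traffic and two previously trained models.
traffic = [(10, False), (20, False), (40, True), (60, True), (80, True)]
registry: Dict[str, Model] = {"v1": lambda x: x >= 70, "v2": lambda x: x >= 50}

deployed = maybe_deploy("v1", registry, traffic, min_accuracy=0.9)
print(deployed)
```

In this run no existing model reaches the required accuracy, so a new one is trained and replaces `v1`. With a lower bar, the existing `v2` would be promoted instead and no training would happen.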

This three-step workflow approach (trigger, analysis, and control) has proven highly effective for addressing a wide range of serviceability challenges. By hosting these solutions on the MLOps platform, teams automatically benefit from built-in capabilities such as unified data access, validation, storage, visualization, CI/CD, and identity and access management (IAM). Together, these capabilities significantly shorten the time to market for ML solutions.

Tomorrow: GenAI is just a step away

Akamai is rapidly adopting generative AI (GenAI) technologies and developing its own. Engineer productivity on our security MLOps platform has already improved significantly with Aether, our internal AI chatbot. Aether is trained on platform documentation, interfaces, and best practices, and is always available to answer questions and suggest solutions.

Our security MLOps is evolving into a scalable framework capable of managing the growing complexity of modern AI systems, including large language models (LLMs) and autonomous agents. What began as MLOps for traditional ML models is expanding into:

LLMOps for managing and tuning GenAI models

AgentOps for deploying, testing, monitoring, and governing autonomous agents

This expansion is complementary to Akamai’s dedicated GenAI platforms:

MLOps supports tuning and evaluation of underlying LLMs as a core component of LLMOps.

When placed behind Model Context Protocol (MCP) servers, MLOps pipelines enable AI agents to access tools and data beyond their internal capabilities, forming the foundation of our security AgentOps.

MLOps workflow management governs the full agent lifecycle from deployment to monitoring.
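As a rough illustration of pipelines exposed as agent tools, the sketch below dispatches a request shaped like an MCP `tools/call` message to a registered pipeline function. It is not built on an MCP SDK and does not implement the full protocol; `pipeline_tool`, `evaluate_model`, and the returned run descriptor are hypothetical stand-ins.

```python
import json
from typing import Any, Callable, Dict

# Hypothetical registry mapping tool names to pipeline launchers.
PIPELINES: Dict[str, Callable[..., Dict[str, Any]]] = {}


def pipeline_tool(name: str):
    """Decorator that registers a pipeline launcher under a tool name."""
    def register(fn: Callable[..., Dict[str, Any]]):
        PIPELINES[name] = fn
        return fn
    return register


@pipeline_tool("evaluate_model")
def evaluate_model(model_name: str, window_hours: int) -> Dict[str, Any]:
    # Stand-in for submitting a real pipeline run; returns a fake run descriptor.
    return {"pipeline": "evaluate_model", "model": model_name,
            "window_hours": window_hours, "status": "submitted"}


def handle_request(request: Dict[str, Any]) -> Dict[str, Any]:
    """Handle one JSON-RPC style request and return the response message."""
    def error(code: int, message: str) -> Dict[str, Any]:
        return {"jsonrpc": "2.0", "id": request.get("id"),
                "error": {"code": code, "message": message}}

    if request.get("method") != "tools/call":
        return error(-32601, "Method not found")
    params = request.get("params", {})
    tool = PIPELINES.get(params.get("name"))
    if tool is None:
        return error(-32602, "Unknown tool")
    result = tool(**params.get("arguments", {}))
    return {"jsonrpc": "2.0", "id": request.get("id"),
            "result": {"content": [{"type": "text", "text": json.dumps(result)}]}}


response = handle_request({
    "jsonrpc": "2.0", "id": 1, "method": "tools/call",
    "params": {"name": "evaluate_model",
               "arguments": {"model_name": "bot-detector", "window_hours": 24}},
})
print(response["result"]["content"][0]["text"])
```

Keeping the registry separate from the request handler means new pipelines can be offered to agents by registering a function, without touching the dispatch logic.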

Together, LLMOps and AgentOps, built on MLOps, provide the foundation for scaling ML workflows, rapidly deploying robust AI agents, and managing them safely in production.

The future is exciting

The transition from MLOps to LLMOps and AgentOps represents a major expansion in how we apply AI. As our organization embraces increasingly autonomous and powerful models, this operational framework enables predictive insights, conversational agents, and autonomous problem-solving at scale.

By investing in these AI operational foundations, we’ve been able to optimize internal processes, improve customer experiences, and drive sustained innovation.

The future of intelligent security operations is already being built.

Learn more

To learn more about how Akamai is investing in AI, and how to protect your business with Akamai security solutions, contact an expert.
