Edge computing is a technology that processes data close to where it is generated, such as on edge devices like sensors or IoT gadgets, rather than sending it to distant cloud infrastructure or data centers. This approach reduces latency, saves bandwidth, and allows for faster, more efficient decision-making in real-time applications.

In today’s technology-driven world, the need for quick and efficient data processing has skyrocketed. This demand is driving the rapid adoption of edge machine learning, or edge ML: running machine learning models directly on edge devices such as smartphones, sensors, and IoT gadgets. Unlike traditional cloud computing, where data is sent to cloud infrastructure for processing, edge ML processes data closer to its source, enabling faster real-time responses and better decision-making.

What is edge ML?

Machine learning (ML) is a branch of artificial intelligence that enables computers to learn from data without being explicitly programmed. Instead of relying on fixed instructions, ML systems use datasets to identify patterns, make decisions or predictions, and improve their performance. For example, ML powers applications like voice recognition, image classification, and personalized recommendations by analyzing data and learning over time.

Edge ML is the use of machine learning models and algorithms directly on devices at the “edge” of the network, such as IoT devices, microcontrollers, or smartphones. This contrasts with traditional approaches, where data is sent to distant data centers or the cloud for analysis. With edge ML, edge devices perform tasks like recognizing patterns, making predictions, or analyzing data locally. By leveraging the computational power of these devices, edge ML delivers results quickly and efficiently without needing to rely as heavily on cloud systems.

For example, an IoT device in a smart home might use edge ML to adjust the temperature or turn off lights based on learned user habits. Because this happens without sending the information to a remote server, responses are faster, security is enhanced because personal data stays in the home, and the system scales more easily. Processing data this way is more efficient and opens up many possibilities for real-time applications across industries.

Why edge ML matters

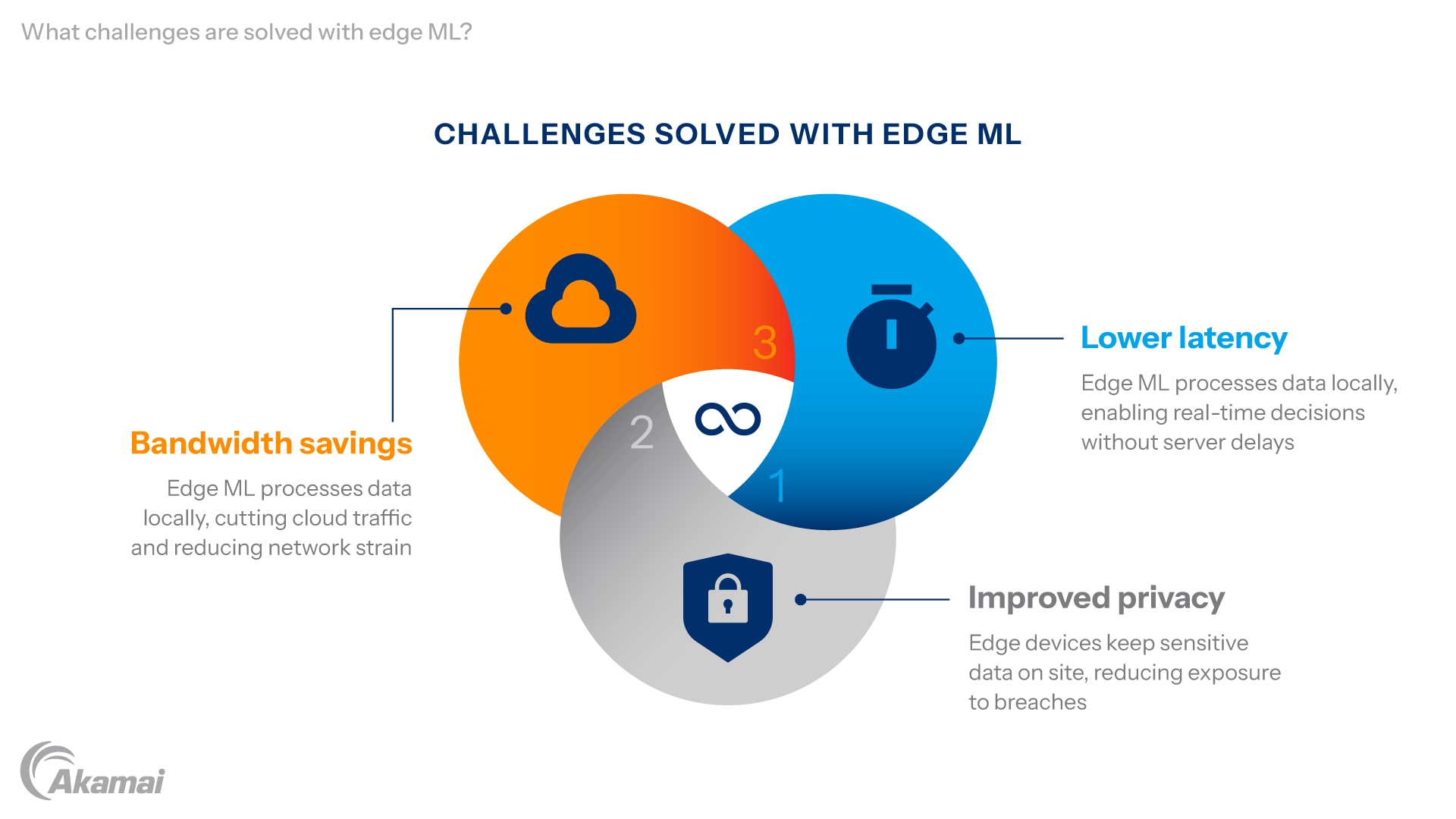

Edge ML solves several challenges posed by traditional cloud computing and centralized machine learning setups.

Lower latency: By processing data locally, edge ML eliminates delays caused by transmitting information to remote servers. This is crucial for real-time applications like autonomous driving or industrial automation, where even a slight delay can have serious consequences.

Improved privacy: When data is processed on edge devices, sensitive information stays on site, reducing the risk of breaches. For instance, healthcare devices that monitor vital signs can analyze patient data without sending it to external servers.

Bandwidth savings: Sending large volumes of raw data to the cloud can strain network resources and increase costs. By analyzing data locally and transmitting only results or selected portions of the data, edge ML minimizes bandwidth usage. Less reliance on network connectivity also improves both speed and privacy.
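To illustrate the bandwidth point, here is a minimal Python sketch that reduces a window of raw readings to a few summary statistics before transmission. The function names `summarize_window` and `payload_sizes`, the JSON encoding, and the synthetic readings are illustrative assumptions, not part of any particular product.

```python
import json
import statistics


def summarize_window(samples: list[float]) -> dict:
    """Hypothetical helper: reduce a window of raw readings to summary statistics."""
    return {
        "count": len(samples),
        "mean": statistics.fmean(samples),
        "min": min(samples),
        "max": max(samples),
        "stdev": statistics.pstdev(samples),
    }


def payload_sizes(samples: list[float]) -> tuple[int, int]:
    """Return (raw_bytes, summary_bytes) for the JSON-encoded payloads."""
    raw = json.dumps(samples).encode("utf-8")
    summary = json.dumps(summarize_window(samples)).encode("utf-8")
    return len(raw), len(summary)


if __name__ == "__main__":
    # Synthetic window of 1,000 vibration readings (illustrative data only).
    window = [0.01 * (i % 50) for i in range(1000)]
    raw_bytes, summary_bytes = payload_sizes(window)
    print(f"raw payload: {raw_bytes} bytes, summary payload: {summary_bytes} bytes")
```

Which statistics are worth sending depends on what the cloud side needs; a real deployment might also upload the raw window when something unusual is detected.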

How edge ML works

Edge ML requires several steps to efficiently process data on edge devices.

Data collection: IoT devices, sensors, and other sources gather data locally. For instance, in an industrial setting, sensors might track vibrations or machinery temperatures to detect early signs of wear and tear. Images are also commonly collected for training edge ML models, especially in computer vision applications. This data is the foundation of any machine learning model, because accurate and sufficiently large datasets are crucial for meaningful analysis. Before it can be used to train the edge ML model, the data must be cleaned, preprocessed, and reformatted. Edge devices often use local storage to hold collected data temporarily before processing it or syncing it with the cloud.
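The collection and preprocessing step can be sketched as a small on-device buffer. This is a minimal Python sketch assuming a single scalar sensor with a known physical range; `SensorBuffer` is a hypothetical name, and min-max scaling is just one common preprocessing choice.

```python
import math
from collections import deque


class SensorBuffer:
    """Hypothetical fixed-size local store for one scalar sensor."""

    def __init__(self, capacity: int):
        # A deque with maxlen silently discards the oldest reading when full.
        self.readings = deque(maxlen=capacity)

    def add(self, value) -> bool:
        """Store a reading; reject missing or non-finite values (basic cleaning)."""
        if value is None or not math.isfinite(value):
            return False
        self.readings.append(float(value))
        return True

    def normalized(self, lo: float, hi: float) -> list[float]:
        """Min-max scale to [0, 1] using the sensor's assumed range, clipping outliers."""
        span = hi - lo
        return [min(max((v - lo) / span, 0.0), 1.0) for v in self.readings]


if __name__ == "__main__":
    buf = SensorBuffer(capacity=3)
    for reading in (20.0, float("nan"), 25.0, 30.0, 35.0):
        buf.add(reading)
    # Only the three most recent valid readings remain: 25.0, 30.0, 35.0.
    print(buf.normalized(lo=0.0, hi=50.0))
```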

Model training: Once the data is ready, the next step is to create and train the machine learning model. This involves choosing a suitable method, such as deep learning or support vector machines, and setting up the model’s structure, typically using frameworks like TensorFlow or other open source tools, often running on cloud platforms such as AWS. Training usually happens on a powerful server, though some models can be trained or fine-tuned directly on the device, depending on the resources available. After training, the model is validated and tuned until it performs its tasks well. Trained models are delivered to edge devices through a deployment process that often includes over-the-air (OTA) updates, allowing new models to be rolled out efficiently as system requirements evolve.
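Over-the-air updates are usually guarded so that a corrupted download never replaces a working model. The Python sketch below assumes the device receives the model bytes together with a SHA-256 digest from a trusted channel; `install_model_update` and the file name are hypothetical. A hash check only detects corruption if the digest itself is trustworthy, so production systems typically add cryptographic signatures.

```python
import hashlib
import os
import tempfile


def install_model_update(payload: bytes, expected_sha256: str, model_path: str) -> bool:
    """Hypothetical OTA step: verify the downloaded model, then swap it in."""
    if hashlib.sha256(payload).hexdigest() != expected_sha256:
        # Corrupted or tampered download: keep serving the current model.
        return False
    directory = os.path.dirname(os.path.abspath(model_path))
    # Write to a temp file in the same directory so the rename stays on one filesystem.
    fd, tmp_path = tempfile.mkstemp(dir=directory, suffix=".tmp")
    try:
        with os.fdopen(fd, "wb") as f:
            f.write(payload)
            f.flush()
            os.fsync(f.fileno())  # make sure the bytes reach storage before the swap
        # On POSIX, os.replace is an atomic rename, so readers see either the
        # old model or the new one, never a half-written file.
        os.replace(tmp_path, model_path)
    except BaseException:
        if os.path.exists(tmp_path):
            os.remove(tmp_path)
        raise
    return True


if __name__ == "__main__":
    with tempfile.TemporaryDirectory() as d:
        path = os.path.join(d, "model.bin")  # hypothetical model file name
        new_model = b"new-model-weights"
        digest = hashlib.sha256(new_model).hexdigest()
        print(install_model_update(new_model, digest, path))    # True
        print(install_model_update(b"tampered", digest, path))  # False
```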

Model optimization and deployment: After training, the model is optimized to run efficiently within the constraints of the device’s hardware and firmware, such as limited computational power and memory. This optimization includes techniques such as quantization (storing weights at lower numerical precision), pruning (removing weights that contribute little), and other forms of model compression, which reduce the model’s size with little or no loss of accuracy. Additional methods, such as knowledge distillation (training a smaller model to mimic a larger one) and designing efficient architectures, further improve performance on resource-constrained devices. The goal is to make AI models lightweight enough to run smoothly on devices such as microcontrollers or IoT gadgets. The optimized model is then deployed to the edge device.
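Pruning and quantization can be illustrated on a plain list of weights. This is a simplified Python sketch, assuming magnitude-based pruning and symmetric per-tensor int8 quantization; the function names are hypothetical, and real toolchains such as the LiteRT converter apply these ideas to whole networks and often calibrate on sample data. Knowledge distillation is not shown.

```python
def prune_by_magnitude(weights: list[float], sparsity: float) -> list[float]:
    """Magnitude pruning: zero out the smallest `sparsity` fraction of weights."""
    k = int(len(weights) * sparsity)
    pruned = list(weights)
    # Indices of the k weights with the smallest absolute value.
    for i in sorted(range(len(weights)), key=lambda j: abs(weights[j]))[:k]:
        pruned[i] = 0.0
    return pruned


def quantize_int8(weights: list[float]) -> tuple[list[int], float]:
    """Symmetric per-tensor int8 quantization: each w is approximated by scale * q."""
    max_abs = max(abs(w) for w in weights)
    scale = max_abs / 127 if max_abs > 0 else 1.0
    # Round to the nearest integer step and clamp to the range [-127, 127].
    q = [max(-127, min(127, round(w / scale))) for w in weights]
    return q, scale


def dequantize(q: list[int], scale: float) -> list[float]:
    """Recover approximate float weights from the int8 values."""
    return [scale * v for v in q]


if __name__ == "__main__":
    weights = [0.8, -0.05, 0.3, -0.6, 0.01, 0.2]
    pruned = prune_by_magnitude(weights, sparsity=0.5)
    print(pruned)  # [0.8, 0.0, 0.3, -0.6, 0.0, 0.0]
    q, scale = quantize_int8(pruned)
    print(q)  # [127, 0, 48, -95, 0, 0]
    print(dequantize(q, scale))
```

Storing each weight as an 8-bit integer instead of a 32-bit float cuts weight storage by roughly 4x, and with rounding to the nearest step each dequantized weight differs from its original by at most half of `scale`.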

Inference and decision-making: Once deployed, the edge device uses the machine learning model to process real-time data and make decisions. This step, known as machine learning inference, involves analyzing the collected data to identify patterns, detect anomalies, or make predictions. Edge devices perform inference locally to generate actionable outputs in real time, even in environments with limited connectivity. For example, in a factory, the device might detect unusual vibration patterns indicating a potential equipment failure. The insights are then delivered directly to users as alerts or automated responses, like shutting down a machine to prevent damage. This real-time inference ensures swift actions and eliminates delays caused by transmitting data to the cloud.
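The factory example can be sketched as a tiny on-device detector. In this minimal Python sketch, a simple statistical baseline (the mean and standard deviation of vibration RMS on a healthy machine) stands in for a trained ML model; `VibrationMonitor`, the thresholds, and the `"alert"`/`"shutdown"` actions are hypothetical.

```python
import math
import statistics


def rms(window: list[float]) -> float:
    """Root-mean-square amplitude of one window of vibration samples."""
    return math.sqrt(sum(x * x for x in window) / len(window))


class VibrationMonitor:
    """Hypothetical detector: learns a healthy baseline, then scores new windows."""

    def __init__(self, alert_z: float = 2.0, shutdown_z: float = 4.0):
        self.alert_z = alert_z
        self.shutdown_z = shutdown_z
        self.mean = 0.0
        self.std = 1.0

    def fit(self, healthy_windows: list[list[float]]) -> None:
        # "Training": record the typical RMS level of a healthy machine.
        levels = [rms(w) for w in healthy_windows]
        self.mean = statistics.fmean(levels)
        # Guard against zero spread when all healthy windows are identical.
        self.std = max(statistics.pstdev(levels), 1e-9)

    def check(self, window: list[float]) -> str:
        # "Inference": how many standard deviations above normal is this window?
        z = (rms(window) - self.mean) / self.std
        if z >= self.shutdown_z:
            return "shutdown"
        if z >= self.alert_z:
            return "alert"
        return "ok"


if __name__ == "__main__":
    monitor = VibrationMonitor()
    monitor.fit([[a, -a, a, -a] for a in (0.9, 1.0, 1.1)])
    print(monitor.check([2.0, -2.0, 2.0, -2.0]))  # shutdown
```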

The benefits of edge ML

Edge ML offers a range of benefits that improve both performance and the user experience.

Real-time processing: One of the most significant benefits of edge ML is its ability to process data almost instantaneously. By performing analysis and inference locally, edge ML eliminates the delays caused by sending data to and from cloud servers. This is particularly critical for applications requiring split-second decisions, such as computer vision in self-driving cars, where detecting and reacting to road obstacles must happen without delay. Similarly, in virtual assistants, language processing can take place on the device, making the response time faster and more natural for users. This ability to deliver fast, real-time results greatly improves the user experience, creating systems that are more efficient, intuitive, and responsive to human needs.

Scalability: Edge ML allows organizations to expand their operations more efficiently by reducing reliance on centralized data centers. In a traditional cloud computing setup, scaling requires significant investment in storage, network infrastructure, and computing resources to handle larger workloads. By offloading data processing tasks to edge devices, companies can deploy thousands or even millions of IoT devices without overwhelming their cloud infrastructure. This scalability makes it easier and more cost-effective to roll out smart solutions across diverse locations, such as deploying smart sensors in urban environments or expanding predictive maintenance systems in manufacturing plants.

Energy efficiency: Processing data locally at the edge can significantly reduce the energy spent transmitting large volumes of raw data and processing them in the cloud. Cloud workloads typically demand extensive resources, from cooling large-scale servers to powering data transport. In contrast, edge ML uses the computational power of smaller devices to handle tasks closer to the data source. This approach can reduce overall energy consumption and is particularly beneficial for energy-conscious industries like renewable energy management or remote IoT applications with limited power supplies. By reducing dependence on energy-intensive cloud processes, edge ML contributes to more sustainable technology solutions.

What are real-world use cases for edge ML?

Edge ML is transforming industries through a variety of applications.

Predictive maintenance: In manufacturing, IoT devices equipped with sensors gather real-time data about machinery, such as temperature, vibrations, and pressure. Edge ML processes this sensor data to predict potential equipment failures before they occur. By identifying patterns and anomalies in machine behavior, organizations can perform predictive maintenance rather than reacting to breakdowns. This reduces unplanned downtime, extends the lifespan of equipment, and significantly lowers maintenance costs, ensuring smoother production workflows.
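One simple way to turn trending sensor data into a maintenance forecast is to fit a line and extrapolate to a failure threshold. This Python sketch assumes wear shows up as a roughly linear temperature rise, which real equipment may not follow; the function names, readings, and threshold are hypothetical, and production systems usually rely on learned models rather than a straight-line fit.

```python
def fit_line(xs: list[float], ys: list[float]) -> tuple[float, float]:
    """Ordinary least-squares fit of y = slope * x + intercept."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    if sxx == 0:
        raise ValueError("need at least two distinct time points")
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def hours_until_threshold(hours: list[float], readings: list[float], threshold: float):
    """Hypothetical estimate of hours remaining before readings cross `threshold`."""
    slope, intercept = fit_line(hours, readings)
    if slope <= 0:
        return None  # not trending toward the limit, so no forecast
    crossing = (threshold - intercept) / slope
    # Clamp at zero: a negative value means the fitted trend has already crossed.
    return max(0.0, crossing - hours[-1])


if __name__ == "__main__":
    # Illustrative bearing temperatures (°C) sampled once per hour.
    hours = [0, 1, 2, 3]
    temps = [60.0, 62.0, 64.0, 66.0]
    print(hours_until_threshold(hours, temps, threshold=80.0))  # 7.0
```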

Smart cities: Edge ML is a game changer for creating smart cities, where technology improves urban living. Traffic sensors and smart streetlights powered by edge computing analyze data in real time to optimize traffic flow, manage congestion, and enhance road safety. Similarly, environmental sensors monitor air quality and noise pollution, enabling city planners to make informed decisions. This localized data processing also helps optimize energy use in buildings and public spaces, promoting sustainability while enhancing the quality of urban life.

Healthcare: In the healthcare industry, wearable devices and smart monitors utilize edge ML to provide patients and doctors with instant insights. For example, wearables like fitness trackers analyze heart rates, sleep patterns, and other vitals using on-device models. More advanced applications include devices that detect irregular heartbeats, monitor glucose levels for diabetics, or provide early warnings for medical emergencies. By enabling real-time monitoring, edge ML improves patient outcomes and reduces the burden on healthcare facilities.

Retail: Edge ML enhances the retail experience by making shopping more personalized and efficient. Edge ML systems in stores analyze customer behavior through cameras and sensors, offering tailored recommendations based on browsing or purchasing habits. Smart checkout systems powered by computer vision and language processing streamline payment processes, reducing the need for traditional cash registers. These solutions improve customer satisfaction while reducing operational costs for retailers.

Agriculture: Farmers are leveraging edge ML to optimize crop production and resource management. Devices like drones and soil sensors collect data on soil moisture, nutrient levels, and weather conditions. This data is processed locally using machine learning models to make predictive recommendations, such as when to irrigate or apply fertilizers. Edge ML also supports automation, such as directing robotic systems to harvest crops or manage pests, making agriculture more efficient and sustainable.

Automotive: Edge ML is critical for enabling real-time decision-making in autonomous vehicles. Sensors and cameras in cars use computer vision and deep learning to detect obstacles, read road signs, and navigate traffic conditions. By processing this data locally, vehicles can make split-second decisions necessary for safe driving. This localized capability reduces latency and ensures reliability, even in areas with poor connectivity, such as remote roads.

Energy: The energy sector benefits from edge ML in managing distributed energy resources like solar panels, wind turbines, and smart grids. Devices equipped with edge ML process real-time data from energy sources to predict fluctuations in supply and demand. This helps optimize power distribution and storage while minimizing energy waste. In addition, smart meters analyze household energy usage, allowing users to make informed decisions to reduce consumption and costs.

Finance: Financial institutions are leveraging edge ML for enhanced security and a better customer experience. ATMs and point-of-sale systems use on-device models to detect fraudulent transactions by analyzing patterns in transaction metrics. Similarly, mobile banking apps use edge ML for real-time verification of user identity through facial recognition or other biometric data. This localized approach reduces reliance on cloud systems, ensuring faster and more secure services.

Entertainment: In entertainment, edge ML improves streaming and gaming experiences by optimizing bandwidth usage and reducing latency. For instance, video streaming platforms use edge ML to predict user preferences and preload content locally to reduce buffering. Similarly, gaming consoles and VR headsets process real-time data to deliver seamless gameplay, offering a smoother user experience.

Logistics: Edge ML is transforming logistics by enabling smart and automated supply chains. Sensors in warehouses track inventory levels and movement, while delivery vehicles use edge ML to optimize routes in real time based on traffic conditions. This reduces delivery times and fuel costs. Additionally, predictive maintenance on delivery vehicles ensures smoother operations and fewer delays in supply chain management.

What are the challenges of managing edge ML?

Despite its many benefits, implementing edge ML comes with several challenges that must be addressed to unlock its full potential. When integrating machine learning with IoT devices, potential issues such as data transmission, latency, and resource constraints can arise, impacting performance and reliability.

Hardware constraints: Many edge devices have limited computational power, memory, and battery life, which makes running complex deep learning models a significant challenge. Developers must use advanced optimization techniques, such as model quantization or pruning, to ensure that AI models can run efficiently on these constrained devices without sacrificing too much accuracy.

Model optimization: Balancing performance and accuracy when optimizing machine learning models for edge deployment is a delicate process. This involves shrinking model sizes while retaining their ability to process real-time data effectively, which can require considerable expertise and experimentation to achieve consistently reliable results.

Scalability and MLOps: Deploying and managing ML models across thousands of edge devices introduces complexities that require robust pipelines and management frameworks. Tools from companies like Microsoft and Amazon help address these scalability issues, but implementing them often demands specialized knowledge and resources, making the process time-consuming and expensive.
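A common MLOps pattern for large fleets is a staged rollout, where a new model goes to a small percentage of devices before reaching the whole fleet. The Python sketch below assumes each device has a stable string ID; `rollout_bucket` and `should_update` are hypothetical helpers, not part of any Microsoft or Amazon tool.

```python
import hashlib


def rollout_bucket(device_id: str, model_version: str) -> int:
    """Hypothetical helper: map a device deterministically to a bucket in [0, 100)."""
    key = f"{model_version}:{device_id}".encode("utf-8")
    # First 8 bytes of SHA-256 as an integer; modulo bias over 100 buckets is negligible.
    return int.from_bytes(hashlib.sha256(key).digest()[:8], "big") % 100


def should_update(device_id: str, model_version: str, rollout_percent: int) -> bool:
    """A device receives the new model once the rollout percentage covers its bucket."""
    return rollout_bucket(device_id, model_version) < rollout_percent


if __name__ == "__main__":
    fleet = [f"sensor-{i:05d}" for i in range(10_000)]  # hypothetical device IDs
    for percent in (1, 10, 50, 100):
        targeted = sum(should_update(d, "v2", percent) for d in fleet)
        print(f"{percent}% stage -> {targeted} devices")
```

Including the model version in the hash key reshuffles cohorts for each release, so the same devices are not always the first to receive an update. Because buckets are deterministic, raising the percentage only adds devices to the rollout and never removes ones already updated.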

What are the future trends in edge ML?

The future of edge ML is promising, with advancements in hardware, tools, and workflows enabling more powerful and efficient applications.

Specialized hardware: Innovations in hardware are making edge ML devices more capable and efficient. For instance, specialized chips such as neural processing units (NPUs) are designed to accelerate neural network computations locally, allowing for faster and more energy-efficient data processing on the device itself.

Open source tools: Open source platforms such as LiteRT (formerly TensorFlow Lite) simplify the development of end-to-end edge ML solutions. These tools provide pretrained models and frameworks that developers can customize and deploy, lowering the barriers to entry for organizations looking to adopt edge ML technologies.

Seamless workflows: Cloud platforms such as AWS offer step-by-step tutorials and prebuilt solutions that streamline edge ML deployment. These resources simplify setup and help systems operate efficiently across diverse industries, from healthcare to retail. Efficient deployment of new models is a key part of the edge ML lifecycle, enabling rapid updates and continuous improvement.

Frequently Asked Questions

What is edge ML, and how does it differ from traditional machine learning?

Edge ML refers to running machine learning models directly on edge devices, enabling them to analyze data locally without sending it to the cloud. Unlike cloud-based machine learning, which sends data to remote servers for processing, edge ML offers faster responses and enhanced privacy, since raw data doesn’t need to leave the device.

How is edge ML used with IoT devices?

Edge ML is commonly paired with IoT devices to create smarter systems. For example, it powers predictive maintenance in factories, where sensors on equipment detect issues early, or it enables smart home automation, such as adjusting lighting or temperature based on user behavior.

Why is edge ML important for IoT?

Edge ML plays a critical role in the Internet of Things (IoT) by delivering real-time insights and making devices more autonomous. It reduces reliance on cloud infrastructure, ensuring IoT systems can function effectively even in areas with limited or unreliable internet connectivity.

Why customers choose Akamai

Akamai is the cybersecurity and cloud computing company that powers and protects business online. Our market-leading security solutions, superior threat intelligence, and global operations team provide defense in depth to safeguard enterprise data and applications everywhere. Akamai’s full-stack cloud computing solutions deliver performance and affordability on the world’s most distributed platform. Global enterprises trust Akamai to provide the industry-leading reliability, scale, and expertise they need to grow their business with confidence.